How to Prevent Duplicate Content Issues (Complete SEO Guide)

In the complex world of Search Engine Optimization (SEO), few topics are as misunderstood yet as fundamentally important as duplicate content. At its core, duplicate content refers to substantial blocks of content within or across domains that either completely match other content or are appreciably similar. While it sounds like a straightforward concept, the technical nuances of how it occurs and how search engines handle it can make or break a website’s visibility.

For many years, a persistent myth has circulated that Google hands out “penalties” for duplicate content, shrouding the topic in unnecessary fear. In reality, Google and other major search engines rarely penalize a site unless the intent is clearly to deceive or manipulate search results. Instead, they filter it. When a search engine encounters multiple versions of the same information, it is forced to choose which one is the “original” to display to users.

This filtering process matters immensely. If your site is riddled with duplicates, you suffer from ranking dilution, crawl budget waste, and indexing confusion. This guide is designed to demystify these concepts, providing a comprehensive roadmap to identifying, fixing, and proactively preventing duplicate content to ensure your site remains healthy, authoritative, and easy for search engines to navigate.

What is Duplicate Content?

Duplicate content is generally categorized into two distinct types based on its nature and its location: exact duplicates and near-duplicates.

Exact duplicates occur when the content on two different URLs is identical down to the character. This often happens due to technical configurations, such as having a page accessible via both http:// and https:// or having a print-friendly version of an article that exists on a separate URL.

Near-duplicates (also known as “appreciably similar” content) involve pages that have small variations but serve the same purpose. A classic example is an e-commerce product page for a shirt where the only difference between two URLs is the selected color or size in the URL parameter, while the product description and headers remain the same.

Internal vs. External

Internal Duplicate Content: This happens within a single domain. It is usually the result of how a Content Management System (CMS) handles URLs, categories, or session IDs.

External Duplicate Content: This occurs when two different domains host the same content. This happens through content syndication (authorized republishing) or content scraping (unauthorized copying).

Common Examples

Same Product, Different Paths: A pair of shoes appearing in both example.com/shoes/running-shoe and example.com/sale/running-shoe.

URL Parameters: example.com/products?category=apparel&sort=price_desc vs. example.com/products?category=apparel.

Trailing Slashes: example.com/page vs. example.com/page/.
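The parameter and trailing-slash cases above can be spotted mechanically by reducing each URL to a comparison key. The sketch below is one possible approach, not a standard algorithm: the `PRESENTATION_PARAMS` set is an assumption about which parameters leave the content unchanged, and the function names are illustrative.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumption: these parameters only change presentation, not content.
PRESENTATION_PARAMS = {"sort", "sessionid"}


def normalize(url: str) -> str:
    """Collapse common URL variants into a single comparison key."""
    parts = urlsplit(url)
    # Drop presentation-only parameters and sort the rest for a stable order.
    query = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query)
        if key not in PRESENTATION_PARAMS
    )
    # Treat /page and /page/ as the same path, but keep the root "/".
    path = parts.path.rstrip("/") or "/"
    return urlunsplit((parts.scheme, parts.netloc.lower(), path, urlencode(query), ""))


def group_variants(urls):
    """Return only the keys that more than one URL collapses into."""
    groups = defaultdict(list)
    for url in urls:
        groups[normalize(url)].append(url)
    return {key: found for key, found in groups.items() if len(found) > 1}
```

Feeding a crawl export into `group_variants` returns each key together with the URLs that collapse into it. The "same product, different paths" case will not show up here, because the paths genuinely differ; that one needs a comparison of the page content itself.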

Why Duplicate Content is a Problem

While you might not get “banned” from Google for having duplicate content, it creates a series of technical and competitive hurdles that can severely handicap your growth.

Search Engine Confusion

Search engines want to provide the best experience for users by showing diverse results. If you have five pages with the same content, Google’s algorithm struggles to decide which one is the “canonical” or authoritative version. As a result, it may pick the wrong page to rank, or it may rotate through them, causing your rankings to fluctuate wildly.

Link Equity Dilution

Backlinks are the currency of the web. When other sites link to you, they pass “authority” (often called link juice). If external sites are split between linking to example.com/page, example.com/page/index.html, and example.com/PAGE, your total authority is divided. By consolidating these into one URL, you concentrate all that power into a single, high-ranking page.

Crawl Budget Waste

Search engines assign a “crawl budget” to every site—a limit on how many pages the bot will crawl in a given timeframe. If your site generates thousands of duplicate URLs (common in faceted navigation), the bot spends its time crawling low-value duplicates instead of discovering your new, high-quality content.

Index Bloat and Keyword Cannibalization

Index bloat occurs when a search engine indexes thousands of unnecessary pages, making your site appear “thin” or low-quality. This leads to keyword cannibalization, where your own pages compete against one another for the same keywords, effectively lowering the ranking potential for all of them.

Types of Duplicate Content

To fix the problem, you must first understand the specific technical bucket the duplicate falls into.

1. Internal Duplicate Content

This is the most common form and is usually invisible to the average user.

Multiple URLs: Sometimes the same content is reachable via different logical paths in the site architecture.

Pagination: When “Page 2” or “Page 3” of a blog or product list has meta tags or headers identical to “Page 1,” search engines may view them as duplicates.

Session IDs: Some older systems track users by appending a unique string to the URL (e.g., ?sessionid=12345). This creates a new URL for every single visitor.

2. External Duplicate Content

Scraped Content: Competitors or “spam bots” copy your content and post it on their own sites.

Syndicated Content: You might legally give a partner site permission to republish your blog post. While beneficial for reach, it can cause the partner’s site to outrank yours if not handled with proper tags.

3. Technical Duplicate Content

URL Parameters: E-commerce filters (sorting by price, color, or rating) create endless URL variations for the same product set.

Trailing Slash vs. Non-Trailing Slash: Servers often treat domain.com/page and domain.com/page/ as two separate entities.

Case Sensitivity: URL paths are case-sensitive, so example.com/About and example.com/about can be seen as different pages whenever a server answers both.

Common Causes of Duplicate Content

Understanding the “why” helps in setting up preventative measures.

URL Variations (HTTP vs. HTTPS): If your site moved to SSL but didn’t implement 301 redirects, both versions might be live and indexed.

WWW vs. Non-WWW: If you haven’t set a preferred domain, www.example.com and example.com are technically different sites.

CMS Taxonomy: Platforms like WordPress allow you to assign a post to multiple categories and tags. This can generate multiple URLs showing the same snippet of text (e.g., the “category” archive page vs. the “tag” archive page).

Faceted Navigation: This is the primary culprit for e-commerce. Filters for size, brand, price, and color can create millions of combinations.

Printer-Friendly Pages: Generating a version of a page specifically for printing often results in a second URL with identical text.

Boilerplate Content: Using the same massive blocks of text (like legal disclaimers or “About the Author” sections) on every single page can sometimes trigger similarity filters.
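To see how quickly faceted navigation multiplies URLs, a little arithmetic helps. The facet sizes below are made-up numbers for a single hypothetical category, and the count assumes each facet is either unset or set to exactly one value.

```python
from math import prod

# Hypothetical store: number of selectable values per facet.
facets = {"size": 6, "brand": 40, "price": 5, "color": 12}


def count_filter_urls(facet_sizes):
    """Count URL states when each facet is unset or set to one value."""
    # Each facet contributes (values + 1) choices; the "+ 1" is "not selected".
    return prod(count + 1 for count in facet_sizes.values())
```

With these numbers, `count_filter_urls(facets)` is 7 × 41 × 6 × 13 = 22,386 URL states for one category page. Multi-select filters, sort orders, and dozens of categories push the total far higher.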

How to Identify Duplicate Content

Before you can fix the issues, you need to conduct an audit to see what search engines are actually seeing.

Tools & Methods

Google Search Operators: Use the

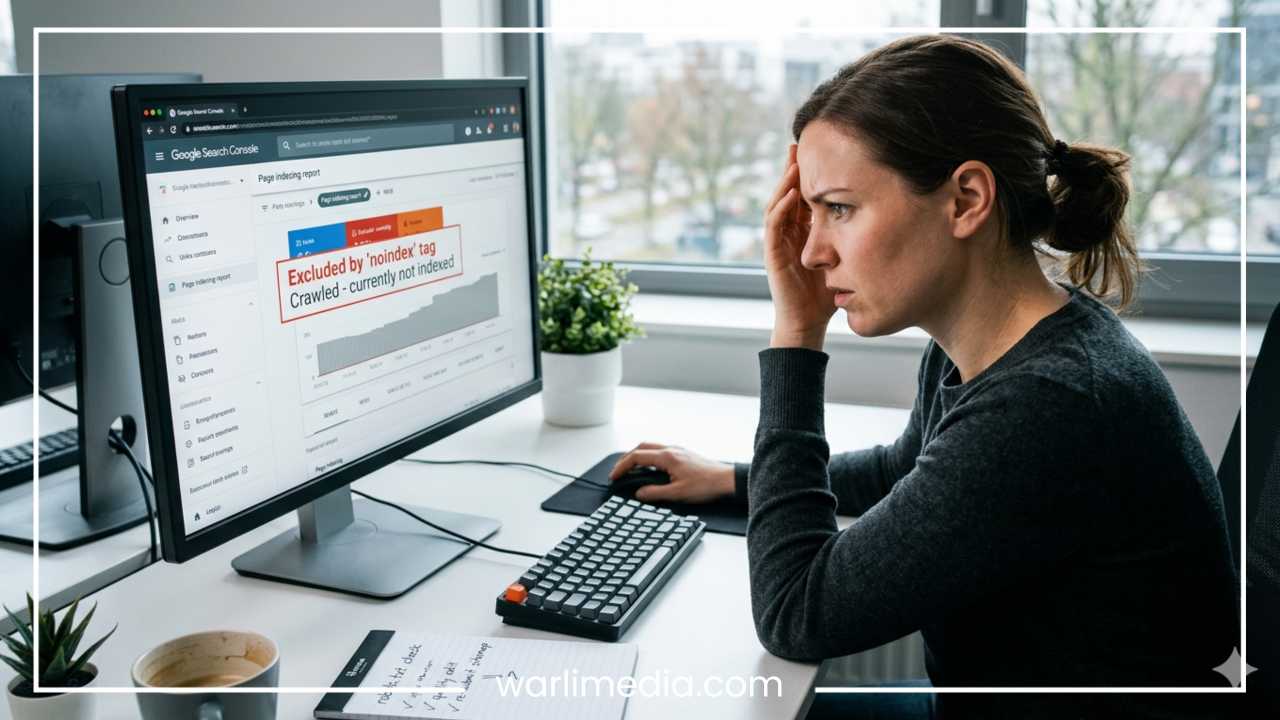

site:operator. Typingsite:yourdomain.com "a specific phrase from your page"into Google can show you how many indexed pages contain that exact text.Google Search Console (GSC): Check the “Indexing” report (specifically the “Excluded” section). Look for entries labeled “Duplicate, Google chose different canonical than user” or “Duplicate, submitted URL not selected as canonical.”

SEO Crawlers: Tools like Screaming Frog SEO Spider are invaluable. They mimic a search engine bot and provide a list of every URL on your site, highlighting identical Page Titles, H1 tags, or content hashes.

Comprehensive Suites: Ahrefs and SEMrush have “Site Audit” features that automatically flag duplicate content and provide a percentage of similarity between pages.
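The content hashes and similarity percentages these tools report can be approximated in a few lines. This is a rough stand-in, not how any particular crawler works: it hashes whitespace-normalized text to catch exact duplicates and uses the standard library's `difflib` to score near-duplicates word by word.

```python
import hashlib
import re
from difflib import SequenceMatcher


def content_hash(text: str) -> str:
    """Hash the text so that identical content yields an identical digest."""
    # Collapse whitespace and case so trivial formatting changes do not matter.
    normalized = re.sub(r"\s+", " ", text).strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def similarity(a: str, b: str) -> float:
    """Return a ratio in [0, 1]; 1.0 means the word sequences are identical."""
    return SequenceMatcher(None, a.lower().split(), b.lower().split()).ratio()
```

Two pages with the same `content_hash` are exact duplicates. For near-duplicates, pick a similarity threshold that suits your site and review anything above it by hand; for instance, "Soft cotton shirt in blue" and "Soft cotton shirt in red" score 0.8.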

Manual Checks

Always check your indexed URL count against your actual page count. If your site only has 100 products but Google has indexed 1,000 URLs, you have a major duplicate content or “index bloat” issue.

How to Fix Duplicate Content

Once identified, you have several tools in your technical SEO arsenal to resolve these issues.

1. Use Canonical Tags (rel="canonical")

The canonical tag is a snippet of HTML code that tells search engines: “I know there are other versions of this page, but this specific URL is the ‘master’ version.”

Best Practice: Use self-referencing canonicals on all pages. If you have a duplicate, point the canonical tag from the duplicate to the original.

Example: On a filtered product page, the canonical tag should point back to the main, unfiltered category page.
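As a sketch of that filtered-page example, the helper below builds the tag you would place in the page's head section. It assumes every query parameter is a filter or sort option, so the query-free URL is the main category page; sites that use parameters for real content would need a narrower rule. The function name is illustrative.

```python
from html import escape
from urllib.parse import urlsplit, urlunsplit


def canonical_link(url: str) -> str:
    """Build a canonical tag pointing at the URL without its query string."""
    parts = urlsplit(url)
    # Assumption: all query parameters are filters, so dropping them
    # yields the main, unfiltered category page.
    target = urlunsplit((parts.scheme, parts.netloc, parts.path, "", ""))
    return f'<link rel="canonical" href="{escape(target, quote=True)}">'
```

Called on an unfiltered URL, the same function produces a self-referencing canonical, which matches the best practice above.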

2. 301 Redirects

A 301 redirect is a permanent redirect from one URL to another. This is the preferred method for consolidating authority.

When to use: Use this when you have two URLs that serve no purpose existing simultaneously (e.g., example.com/home and example.com/index.html).

Benefit: It passes nearly 100% of the link equity to the destination page.
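In practice a 301 is usually configured in the web server or CMS, but the underlying logic is just a lookup. The map and function below are hypothetical and only illustrate what the server sends back.

```python
# Hypothetical redirect map: duplicate path -> preferred path.
REDIRECTS = {
    "/index.html": "/home",
    "/home/": "/home",
}


def respond(path: str):
    """Return (status, headers) for a request path."""
    target = REDIRECTS.get(path)
    if target is not None:
        # 301 tells browsers and crawlers that the move is permanent.
        return 301, {"Location": target}
    return 200, {}
```

Every duplicate should point straight at the final URL. Chaining one redirect into another adds hops for crawlers and users alike.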

3. Use the Noindex Tag

If you have pages that are useful for users but have no SEO value—such as search result pages on your site or specific archive filters—use the <meta name="robots" content="noindex"> tag. This tells Google to keep the page out of its index entirely, preventing it from competing with your main pages.
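When auditing, it helps to confirm that the tag is actually present in the HTML your server returns. Here is a minimal checker built on Python's standard `html.parser`; the class and function names are illustrative, and it only inspects the meta tag, not HTTP headers.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "meta" and (attributes.get("name") or "").lower() == "robots":
            content = attributes.get("content") or ""
            # The content attribute is a comma-separated list of directives.
            self.directives.update(part.strip().lower() for part in content.split(","))


def is_noindex(html: str) -> bool:
    """Return True if the page carries a robots meta tag with noindex."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return "noindex" in parser.directives
```

Keep in mind that a crawler has to be able to fetch the page to see this tag, so a noindexed URL should not also be blocked in robots.txt.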

4. URL Parameter Handling

Google Search Console once offered a URL Parameters tool for telling Google how to handle specific parameters, but Google retired it in 2022. The modern approach is to use the robots.txt file to disallow the crawling of specific parameter patterns or to use canonical tags to handle the logic.
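For the robots.txt route, a rule such as `Disallow: /*?*sort=` (a hypothetical pattern for a `sort` parameter) relies on the wildcard conventions major search engines support: `*` matches any run of characters and a trailing `$` anchors the end of the URL. Before deploying a rule, you can test which URLs it would catch with a simplified matcher like this one. It is a sketch of the pattern matching only and ignores Allow rules, rule precedence, and user-agent groups.

```python
import re


def rule_matches(pattern: str, path: str) -> bool:
    """Check a robots.txt path pattern against a URL path plus query string."""
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    # Escape literal pieces and let each "*" match any run of characters.
    regex = ".*".join(re.escape(piece) for piece in pattern.split("*"))
    if anchored:
        regex += "$"
    # re.match anchors at the start, so unanchored rules behave as prefixes.
    return re.match(regex, path) is not None
```

For example, `rule_matches("/*?*sort=", "/products?category=apparel&sort=price_desc")` is true, while the same rule leaves `/products?category=apparel` crawlable.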

5. Content Consolidation

Sometimes, you have “thin” pages that are slightly different but cover the same topic. Instead of trying to keep both, merge them into one “Power Page.” Delete the weaker page and 301 redirect its URL to the new, comprehensive version.

Preventing Duplicate Content

Proactive management is easier than reactive fixing. Implement these strategies during your site setup.

Website Setup

Choose a Preferred Domain: Decide between www and non-www and force all traffic to that version via server-level redirects.

Enforce HTTPS: Ensure every http request is automatically upgraded to https.
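Both rules can be combined so that any variant reaches the preferred version in a single hop. The sketch below assumes the non-www HTTPS host is the preferred one; `PREFERRED_HOST` and the function name are placeholders, and a real site would express the same logic in its server or CDN configuration.

```python
from urllib.parse import urlsplit, urlunsplit

# Assumption: the non-www HTTPS host is the preferred version.
PREFERRED_HOST = "example.com"


def redirect_target(url: str):
    """Return the URL to 301-redirect to, or None if it is already preferred."""
    parts = urlsplit(url)
    host = parts.netloc.lower()
    if host == "www." + PREFERRED_HOST:
        host = PREFERRED_HOST
    # Force https and the preferred host while keeping path and query intact.
    preferred = urlunsplit(("https", host, parts.path, parts.query, parts.fragment))
    return None if preferred == url else preferred
```

Resolving the scheme and host together avoids a chain such as http://www → https://www → https://non-www, where each extra hop is one more request for crawlers to follow.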

Content Strategy

Unique Descriptions: Especially in e-commerce, avoid using the manufacturer’s default product description. If 1,000 stores use the same description, Google will only rank the one with the highest authority. Writing your own unique descriptions is the best way to stand out.

Meta Data: Ensure every page has a unique Title Tag and Meta Description. This helps search engines distinguish the intent of the page.

Technical SEO

Pagination Logic: Use clear internal linking for paginated series. While rel="next" and rel="prev" are no longer used by Google as a “hint” for indexing, providing a “View All” page or ensuring each paginated page has a unique URL structure is still good practice.

Structured Data: Use Schema markup to clearly define the relationships between your content, which helps search engines understand the context of your data.

Duplicate Content in Special Cases

1. E-commerce Websites

The biggest challenge here is faceted navigation (filters for price, color, etc.). The best approach is often a combination of:

Ajax-based filtering (which doesn’t change the URL).

Canonical tags pointing back to the main category.

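Robots.txt blocking the crawl of specific filter combinations that provide no unique value. Keep in mind that a crawler cannot read a canonical or noindex tag on a URL it is blocked from fetching, so choose one mechanism per URL pattern rather than stacking them.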

2. Blogging Platforms

WordPress and other CMS platforms create “Category,” “Tag,” and “Author” archives. If a post is in the “Marketing” category and also tagged “SEO,” the same post snippet appears on both archive pages.

Fix: Pick one (usually categories) to be indexable and “noindex” the other (tags) to keep the index clean.

3. International Sites

If you have a site in English for the US and English for the UK, the content will be nearly identical.

Fix: Use hreflang tags. These tell Google: “This page is for US users, and this nearly identical page is for UK users.” This prevents them from being seen as duplicates.
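As an illustration, the tags for a US/UK pair could be generated like this. The URLs in the usage note are placeholders and the helper name is made up; the output follows the standard `<link rel="alternate" hreflang="...">` form.

```python
from html import escape


def hreflang_links(alternates: dict) -> list:
    """Build one <link rel="alternate"> tag per language/region variant."""
    return [
        f'<link rel="alternate" hreflang="{escape(code, quote=True)}" '
        f'href="{escape(url, quote=True)}">'
        for code, url in alternates.items()
    ]
```

Calling `hreflang_links({"en-us": "https://example.com/us/", "en-gb": "https://example.com/uk/"})` yields two tags. Each regional page should carry the full set, including the tag that points to itself, because the annotations only count when the pages reference each other.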

Duplicate Content Myths

Myth 1: “Google penalizes all duplicate content.”

Reality: There is no “duplicate content penalty” that suppresses your entire site unless you are engaging in mass-scale scraping or “spun” content meant to manipulate results. Most sites just suffer from poor rankings because Google is confused.

Myth 2: “You must remove all duplicates.”

Reality: Some duplicates are necessary for user experience (like a PDF version of a whitepaper or a printer-friendly recipe). You don’t need to delete them; you just need to manage them with canonical or noindex tags.

Myth 3: “Canonical tags are a directive.”

Reality: Canonical tags are a hint, not a command. If Google thinks your canonical tag is pointing to an irrelevant page, it may ignore it. Always ensure your canonical targets are highly relevant.

Best Practices Checklist

Implement a site-wide 301 redirect for WWW/Non-WWW and HTTP/HTTPS.

Use self-referencing canonical tags on every page of your site.

Write unique product descriptions rather than using manufacturer defaults.

Audit your site quarterly using tools like Screaming Frog or Ahrefs.

Check Google Search Console for “Excluded” pages monthly.

Noindex low-value archive pages (Tags, Date archives, internal Search results).

Consolidate thin content into larger, more authoritative pages.

Final Thoughts

Managing duplicate content is not about avoiding a penalty; it is about providing search engines with a clear, unambiguous map of your website’s value. By ensuring that every URL serves a distinct purpose and that technical “noise” is silenced through redirects and canonical tags, you allow your best content to shine.

SEO is often a game of “marginal gains.” By cleaning up duplicate content issues, you maximize your crawl budget, concentrate your link equity, and ensure that when a user searches for your keywords, Google knows exactly which page to show them. Regular audits and a “canonical-first” mindset will keep your site’s architecture lean and your rankings competitive for the long haul.