How Search Engines Work (In Simple Terms)

Have you ever wondered how Google finds the answer to an obscure question in less than half a second? You type a few words into a search bar, hit enter, and instantly, a list of millions of relevant pages appears. It feels like magic, but it is actually the result of an incredibly complex, highly organized process happening behind the scenes.

Every day, billions of people use search engines to find everything from “how to tie a tie” to “best pizza near me.” Yet, most of us rarely stop to think about the massive infrastructure required to make that possible. The internet is not a single, organized library; it is a chaotic, ever-expanding web of data. Search engines are the tools that bring order to this chaos.

To understand how they work, you can think of a search engine as a giant digital librarian. This librarian has to read every book in the world, remember exactly what is on every page, and then be able to hand you the perfect book the moment you ask a question.

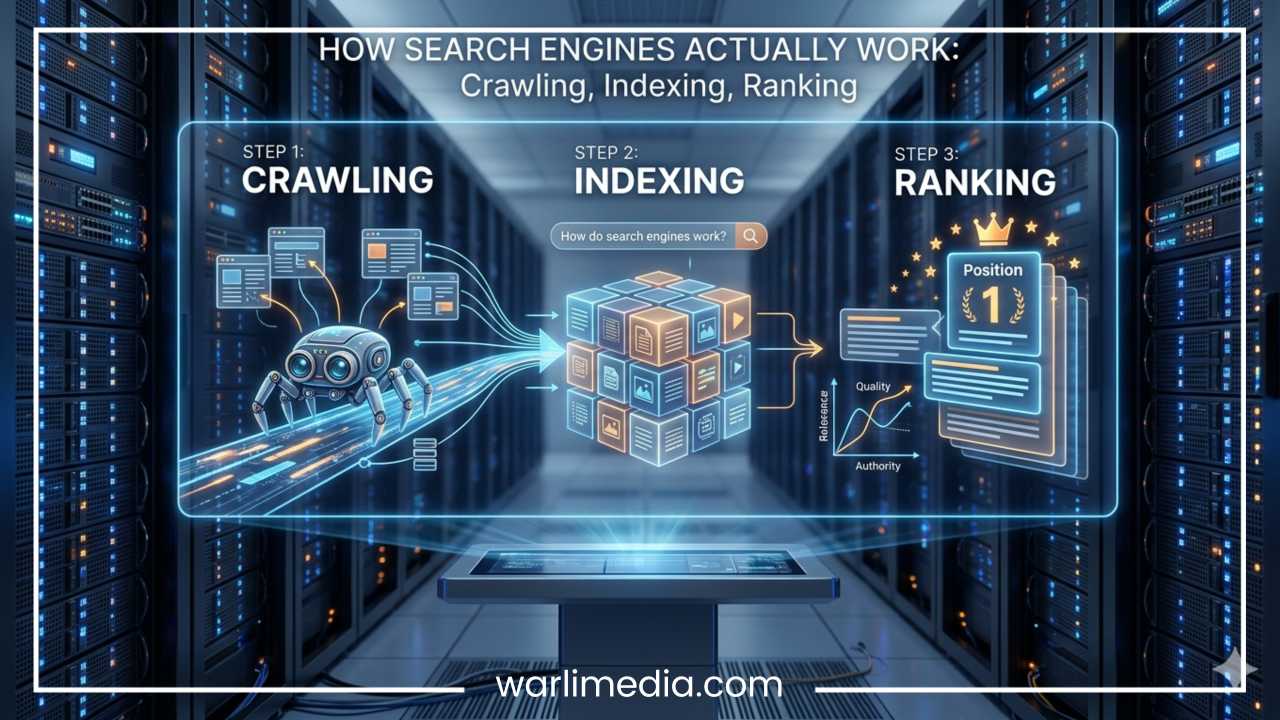

In technical terms, search engines accomplish this through three primary stages:

Crawling: Discovering what content exists on the internet.

Indexing: Organizing and storing that content in a giant database.

Ranking: Deciding which pieces of content best answer a user’s specific query.

In this article, we will break down each of these steps, explore how artificial intelligence is changing the game, and debunk some common myths about how search works.

What Is a Search Engine?

At its core, a search engine is a web-based tool designed to search for information on the World Wide Web. While we often use “Google” as a verb, it is important to remember that there are many different search engines, including Bing, Yahoo, DuckDuckGo, and Baidu. Each has its own unique way of doing things, but their fundamental goal remains the same: to organize the world’s information and make it universally accessible and useful.

When you type a word or phrase into a search engine, you are performing a search query. The engine then scours its records to provide you with a Search Engine Results Page (SERP). On this page, you will usually see two types of results:

Organic Results: These are the “natural” listings that the search engine deems most relevant to your query based on merit and quality.

Paid Results (Ads): These are listings that businesses have paid to display. They are usually marked with a small “Sponsored” or “Ad” label.

The ultimate goal of a search engine is to provide the best possible answer as quickly as possible. If a search engine consistently gave you irrelevant or low-quality results, you would stop using it. Therefore, search engines are constantly updating their systems to better understand human language and the quality of web content.

“A search engine is like a librarian for billions of web pages, sorting through an infinite stack of papers to find the one sentence you actually need.”

Step 1: Crawling — How Search Engines Discover Pages

Before a search engine can show you a website, it first has to know that the website exists. This discovery phase is called crawling.

Search engines use automated programs known as crawlers, spiders, or bots. Google’s famous crawler is known as Googlebot. These bots are constantly browsing the internet, 24 hours a day, 7 days a week.

How Crawlers Move Around

Imagine the internet is a vast system of cities and the websites are houses. To get from one house to another, the crawler needs a road. On the internet, those roads are links.

A crawler starts with a list of web addresses from past crawls and sitemaps provided by website owners. As it visits these websites, it looks for links to other pages. When it finds a link, it follows it to a new page, and the process repeats. By following links, the crawler can jump from a blog in New York to a news site in London and then to a shoe store in Tokyo. This is why links are the backbone of the internet; without them, a page is essentially invisible to search engines.
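
The link-following loop described above can be sketched in a few lines of Python. This is a toy, not how any real crawler is built: the `LINKS` dictionary stands in for the web, the page names are made up, and no network requests are made.

```python
from collections import deque

# Hypothetical link graph: each page maps to the pages it links to.
LINKS = {
    "blog.example/ny": ["news.example/london"],
    "news.example/london": ["shop.example/tokyo", "blog.example/ny"],
    "shop.example/tokyo": [],
    "orphan.example/page": [],  # no other page links here
}

def crawl(seeds, links):
    """Visit the seed pages, then keep following links to pages not seen yet."""
    seen = set(seeds)
    queue = deque(seeds)
    visited_order = []
    while queue:
        page = queue.popleft()
        visited_order.append(page)
        for target in links.get(page, []):
            if target not in seen:
                seen.add(target)
                queue.append(target)
    return visited_order

discovered = crawl(["blog.example/ny"], LINKS)
print(discovered)
```

Starting from the New York blog, the loop reaches London and Tokyo, but it never reaches `orphan.example/page` because no link points to it.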

Key Tools for Crawling

To help crawlers find their way, webmasters use a few specific tools:

Internal Links: These are links that connect one page of a website to another page on the same site. They help crawlers understand the structure of a website.

XML Sitemaps: This is essentially a map of a website that tells the crawler exactly which pages are important and where to find them.

Robots.txt: This is a small file on a server that gives instructions to the bots. It can tell a crawler, “You are allowed to look at my blog, but please stay out of my private admin folders.”
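
To make the robots.txt idea concrete, here is a small sketch using Python's built-in `urllib.robotparser` module. The file contents, the bot name `ExampleBot`, and the URLs are all invented for illustration.

```python
from urllib.robotparser import RobotFileParser

# A made-up robots.txt: the blog is open, the admin folder is off limits.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Allow: /blog/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# Ask whether a (hypothetical) bot may fetch each URL.
blog_ok = parser.can_fetch("ExampleBot", "https://example.com/blog/post-1")
admin_ok = parser.can_fetch("ExampleBot", "https://example.com/admin/settings")
print(blog_ok, admin_ok)
```

A well-behaved crawler runs a check like this before requesting a page. Keep in mind that robots.txt is a request, not a lock: it only works for bots that choose to honor it.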

Why Discovery Matters

If a website has no links pointing to it from other sites (backlinks), and the owner hasn’t submitted a sitemap, the search engine might never find it. This is why new websites often take some time to appear in search results.
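
For a sense of what a sitemap looks like to a crawler, the sketch below reads a tiny XML sitemap and pulls out the page addresses. The sitemap content and URLs are made up; the namespace is the one defined by the public sitemaps.org protocol.

```python
import xml.etree.ElementTree as ET

# A made-up sitemap with two pages.
SITEMAP_XML = """<?xml version="1.0" encoding="UTF-8"?>
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc><lastmod>2024-01-15</lastmod></url>
  <url><loc>https://example.com/blog/first-post</loc></url>
</urlset>
"""

NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

def sitemap_urls(xml_text):
    """Return every <loc> address listed in the sitemap."""
    root = ET.fromstring(xml_text.encode("utf-8"))
    return [loc.text.strip() for loc in root.findall("sm:url/sm:loc", NS)]

urls = sitemap_urls(SITEMAP_XML)
print(urls)
```

A crawler could add these addresses straight to its to-visit list, even if no other site links to them yet.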

Furthermore, crawlers do not visit every page at the same frequency. Highly popular sites that update their content every hour (like news outlets) are crawled almost constantly. Smaller sites that rarely change might only see a crawler once every few weeks. If a crawler encounters a “broken link” (a 404 error), it hits a dead end, which is why maintaining a healthy website is crucial for being discovered.

Read: How to Use LinkedIn Features to Market Your Business?

Step 2: Indexing — How Search Engines Store Information

Once a crawler finds a page, the next step is to make sense of it. This process is called indexing.

It is a common misconception that when you search Google, you are searching the live internet. You aren’t. You are actually searching Google’s index, which is a massive digital library of all the pages the crawlers have found and “read.”

Processing the Content

When a bot crawls a page, it renders the content. It looks at the text, the images, the videos, and the “metadata” (information in the code that describes what the page is about). The search engine tries to categorize the page based on its topic.

For example, if a page mentions “flour,” “yeast,” “oven temperature,” and “kneading,” the indexer will categorize that page as being about baking bread.

The Indexing Database

Think of the index like the index at the back of a massive textbook. If you want to find information about “Photosynthesis,” you don’t flip through every page; you go to the back, find “P,” and see which page numbers are listed. The search engine index does the same thing on a global scale. It stores billions of words and the web addresses where those words can be found.
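
The "back of the textbook" idea is usually called an inverted index. Here is a minimal sketch, assuming three made-up pages; real indexes store far more than a set of addresses per word.

```python
import re
from collections import defaultdict

# Hypothetical pages and their text.
PAGES = {
    "https://example.com/bread": "Knead the flour and yeast, then set the oven temperature.",
    "https://example.com/plants": "Photosynthesis lets plants turn light into energy.",
    "https://example.com/pizza": "Pizza dough needs flour, yeast, and a hot oven.",
}

def tokenize(text):
    """Lowercase the text and split it into simple words."""
    return re.findall(r"[a-z0-9]+", text.lower())

def build_index(pages):
    """Map each word to the set of addresses where it appears."""
    index = defaultdict(set)
    for url, text in pages.items():
        for word in tokenize(text):
            index[word].add(url)
    return index

index = build_index(PAGES)
print(sorted(index["yeast"]))
print(sorted(index["photosynthesis"]))
```

Looking up a word is now a single dictionary lookup instead of a scan through every page.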

Not Everything Gets Indexed

Just because a page was crawled doesn’t mean it will be indexed. Search engines are very picky about what they keep in their permanent library. A page might be skipped if:

It is low quality: The content is very short, gibberish, or provides no value.

It is duplicate content: If the same article appears on ten different websites, the search engine might only index one of them to save space and provide variety to users.

It is blocked: The website owner used a “noindex” tag to tell the engine not to store that specific page.

Essentially, crawled means the search engine saw it; indexed means the search engine remembered it and is willing to show it to users.
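
The three reasons for skipping a page can be sketched as a simple filter. Everything here is an assumption made for illustration: the 50-word cutoff is arbitrary, exact-match hashing is a crude stand-in for real duplicate detection, and the function name `should_index` is invented.

```python
import hashlib
from html.parser import HTMLParser

class RobotsMetaParser(HTMLParser):
    """Notes whether the page contains <meta name="robots" content="...noindex...">."""
    def __init__(self):
        super().__init__()
        self.noindex = False

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = dict(attrs)
        name = (attr.get("name") or "").lower()
        content = (attr.get("content") or "").lower()
        if name == "robots" and "noindex" in content:
            self.noindex = True

def should_index(html, text, seen_hashes, min_words=50):
    """Return (decision, reason) for a crawled page."""
    meta = RobotsMetaParser()
    meta.feed(html)
    if meta.noindex:
        return False, "blocked by noindex"
    if len(text.split()) < min_words:
        return False, "too little content"
    # Normalize case and whitespace, then fingerprint the text.
    normalized = " ".join(text.lower().split())
    digest = hashlib.sha256(normalized.encode("utf-8")).hexdigest()
    if digest in seen_hashes:
        return False, "duplicate content"
    seen_hashes.add(digest)
    return True, "indexed"

seen = set()
article = "word " * 60
first = should_index("<html><head></head></html>", article, seen)
copy = should_index("<html><head></head></html>", article, seen)
blocked = should_index('<meta name="robots" content="noindex">', article, seen)
print(first, copy, blocked)
```

The first page is kept, the identical copy is dropped, and the third is skipped because its owner asked for that.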

Step 3: Ranking — How Search Engines Decide What Appears First

This is the stage where the magic happens. There might be 50 million indexed pages that mention the word “pancake recipes.” How does the search engine decide which ten to show on the first page?

This is determined by algorithms: complex mathematical formulas that weigh hundreds of different factors to determine which result is the most helpful for the user. While the exact workings of these algorithms are closely guarded, we know the most important categories they look at.

Relevance

The first thing the engine asks is: “Does this page actually answer the user’s question?” It looks for keywords in the title, the headings, and the body text. If you search for “blue running shoes,” the engine will prioritize pages that frequently use those specific terms in a meaningful way over pages that just talk about “shoes” in general.
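
A very rough way to picture keyword relevance is to count matching words and give extra credit to matches in prominent places. The weights below are invented for this sketch, and real engines use far richer signals than counting.

```python
import re

def tokenize(text):
    return re.findall(r"[a-z0-9]+", text.lower())

# Hypothetical weights: a match in the title counts more than one in the body.
FIELD_WEIGHTS = {"title": 3.0, "headings": 2.0, "body": 1.0}

def relevance(query, page):
    """Sum the weighted number of query-word matches across the page's fields."""
    terms = set(tokenize(query))
    score = 0.0
    for field, weight in FIELD_WEIGHTS.items():
        words = tokenize(page.get(field, ""))
        score += weight * sum(1 for w in words if w in terms)
    return score

specific = {
    "title": "Blue Running Shoes Review",
    "headings": "Best blue running shoes",
    "body": "These blue shoes are built for running.",
}
generic = {
    "title": "All About Shoes",
    "headings": "Types of shoes",
    "body": "Shoes come in many styles.",
}

query = "blue running shoes"
print(relevance(query, specific), relevance(query, generic))
```

The page that is specifically about blue running shoes scores three times higher than the general shoe page. A pure count like this is easy to game by repeating words, which is one reason engines combine it with many other factors.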

Quality and Authority

Search engines want to show trustworthy information. One of the biggest ways they measure this is through backlinks. If a hundred high-quality websites (like the New York Times or a major university) link to a specific article, the search engine assumes that article must be an authoritative source. In simple terms, a link is like a “vote” of confidence.
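
The "links as votes" idea can be sketched with a simplified version of the well-known PageRank approach. This article does not say which formula any engine actually uses, so treat the function below, its damping value of 0.85, and the four-page link graph as illustrative assumptions.

```python
def link_scores(links, damping=0.85, iterations=50):
    """Repeatedly pass each page's score along its outgoing links."""
    pages = list(links)
    n = len(pages)
    scores = {p: 1.0 / n for p in pages}
    for _ in range(iterations):
        # Every page starts each round with a small baseline score.
        new = {p: (1.0 - damping) / n for p in pages}
        for page, targets in links.items():
            if targets:
                share = damping * scores[page] / len(targets)
                for target in targets:
                    new[target] += share
            else:
                # A page with no outgoing links spreads its score evenly.
                for p in pages:
                    new[p] += damping * scores[page] / n
        scores = new
    return scores

# Hypothetical graph: three sites all link to the same article.
LINKS = {
    "news": ["article"],
    "university": ["article"],
    "blog": ["article", "news"],
    "article": [],
}

scores = link_scores(LINKS)
print(max(scores, key=scores.get))
```

Because a page's vote is worth more when that page itself has a high score, a link from a respected site counts for more than a link from an unknown one.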

User Experience (UX)

A search engine won’t rank a page highly if it provides a bad experience for the user. Factors here include:

Mobile-friendliness: Does the site look good on a smartphone?

Page Speed: Does the site load in a second, or does it take forever?

Navigation: Can a user easily find their way around, or is the site a mess of pop-up ads and broken buttons?

Freshness

For some searches, time is of the essence. If you search for “World Cup scores,” you don’t want a perfectly written, authoritative article from eight years ago. You want the results from today. The algorithm recognizes “freshness” as a priority for news, sports, and trending topics.
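
One simple way to model freshness is a score that fades as a page ages. The half-life of seven days and the quality numbers below are made up for this sketch; nothing here reflects a published ranking formula.

```python
def freshness_weight(age_days, half_life_days=7.0):
    """Weight is 1.0 for a brand-new page and halves every half_life_days."""
    return 0.5 ** (age_days / half_life_days)

def time_sensitive_score(quality, age_days):
    """Combine a quality score with the freshness weight."""
    return quality * freshness_weight(age_days)

old_article = time_sensitive_score(quality=0.95, age_days=8 * 365)
todays_report = time_sensitive_score(quality=0.60, age_days=0)
print(todays_report > old_article)
```

For a query like "World Cup scores," today's modest report beats the polished article from eight years ago. For a timeless topic, an engine would apply little or no decay.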

Search Intent

Modern search engines are very good at understanding why you are searching for something. This is called Search Intent. There are generally three types:

Informational: You want to learn something (“how to fix a leak”).

Navigational: You are trying to find a specific website (“Facebook login”).

Transactional: You are ready to buy something (“buy wireless headphones”).

If you search for “pizza,” the engine uses your location to show you nearby restaurants (transactional/local intent). If you search for “history of pizza,” it shows you articles and encyclopedias (informational intent).
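
As a toy illustration of intent detection, the sketch below guesses the intent from cue words in the query. The cue lists are invented, and real engines rely on machine learning plus signals such as location, which this example ignores.

```python
# Hypothetical cue words for each intent, checked in this order.
INTENT_CUES = {
    "transactional": ("buy", "price", "order", "cheap", "near me"),
    "navigational": ("login", "sign in", "homepage", "official site"),
    "informational": ("how", "what", "why", "history", "guide"),
}

def guess_intent(query):
    """Return the first intent whose cue appears in the query."""
    q = query.lower()
    words = q.split()
    for intent, cues in INTENT_CUES.items():
        for cue in cues:
            # Multi-word cues are matched as phrases, single words exactly.
            if (" " in cue and cue in q) or cue in words:
                return intent
    return "informational"  # fallback when no cue matches

for example in ("how to fix a leak", "Facebook login", "buy wireless headphones"):
    print(example, "->", guess_intent(example))
```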

What Happens in Less Than a Second?

To appreciate the scale of this technology, let’s look at the timeline of a single search. When you type “why is the sky blue” and hit enter, the following happens in roughly 300 to 500 milliseconds:

Request: Your query is sent to the search engine’s servers.

Lookup: The engine doesn’t search the web; it searches its massive index.

Filtering: It identifies every page in the index that contains the words “sky” and “blue.”

Algorithmic Evaluation: The ranking algorithm applies hundreds of rules to those pages. It checks which ones are from scientific sources, which ones have the best images, and which ones load the fastest.

Snippet Generation: The engine pulls a small snippet of text from the best pages to show you a preview.

Display: The results are sent back to your screen.

All of this happens faster than you can blink. It is a feat of engineering that involves thousands of computers working in parallel across data centers all over the world.
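
The lookup, filtering, evaluation, and snippet steps can be strung together in a miniature search function. This is a sketch under heavy assumptions: three made-up documents, a tiny stop-word list, and a scoring rule that only counts word matches.

```python
import re

# Hypothetical documents standing in for the index contents.
DOCS = {
    "https://example.org/rayleigh": "The sky is blue because air molecules scatter blue light more than red light.",
    "https://example.org/paint": "Blue paint looks great on a bedroom wall.",
    "https://example.org/weather": "At midday a clear sky looks blue.",
}
STOP_WORDS = {"why", "is", "the", "a", "an", "of", "to"}

def tokenize(text):
    return re.findall(r"[a-z0-9]+", text.lower())

def build_index(docs):
    """Map each word to the set of documents containing it."""
    index = {}
    for url, text in docs.items():
        for word in set(tokenize(text)):
            index.setdefault(word, set()).add(url)
    return index

def search(query, docs, index, limit=10):
    terms = [t for t in tokenize(query) if t not in STOP_WORDS]
    if not terms:
        return []
    # Lookup and filtering: keep documents that contain every query term.
    candidates = set.intersection(*(index.get(t, set()) for t in terms))
    # Evaluation: count how often the query terms occur in each document.
    def score(url):
        words = tokenize(docs[url])
        return sum(words.count(t) for t in terms)
    ranked = sorted(candidates, key=lambda u: (-score(u), u))
    # Snippet generation: use the first 60 characters as a preview.
    return [(url, docs[url][:60]) for url in ranked[:limit]]

INDEX = build_index(DOCS)
results = search("why is the sky blue", DOCS, INDEX)
for url, snippet in results:
    print(url, "|", snippet)
```

The paint page is filtered out because it never mentions "sky," and the page that talks about blue light the most comes first.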

Why SEO Exists

Because the difference between being on the first page of results and the second page is massive (most people never click on page two), a whole industry was created to help websites rank better. This is called Search Engine Optimization (SEO).

SEO is the practice of making a website more attractive to search engines. It generally falls into two categories:

White-Hat SEO (The Good Way)

This involves following the search engine’s guidelines to provide a better experience for users. It includes:

Writing high-quality, original content.

Making sure the website loads quickly.

Using clear titles and descriptions.

Building a site that is easy to navigate on a phone.

Black-Hat SEO (The Bad Way)

These are “cheating” methods used to trick the algorithm. Examples include keyword stuffing (writing the same word a thousand times in hidden text) or buying fake links from “link farms.” Search engines are very good at catching these tricks, and they will “penalize” or even ban websites that use them.
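
To see why keyword stuffing is easy to spot, consider a check that measures how much of a page is taken up by its most repeated word. The 30% threshold is an arbitrary assumption for this sketch, and real spam detection is far more sophisticated.

```python
import re
from collections import Counter

def keyword_density(text):
    """Return the most common word and the share of all words it accounts for."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    if not words:
        return None, 0.0
    word, count = Counter(words).most_common(1)[0]
    return word, count / len(words)

def looks_stuffed(text, threshold=0.3):
    """Flag text in which one word makes up more than the threshold share."""
    _, density = keyword_density(text)
    return density > threshold

natural = "Our lightweight running shoes use breathable mesh and a cushioned sole for long runs."
stuffed = "shoes shoes cheap shoes best shoes buy shoes shoes"

print(looks_stuffed(natural), looks_stuffed(stuffed))
```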

The goal of SEO isn’t just to “beat the system,” but to make it as easy as possible for the search engine to understand that your website is a high-quality answer to a user’s question.

How AI Is Changing Search Engines

The world of search is currently undergoing its biggest transformation since its inception, thanks to Artificial Intelligence (AI) and Machine Learning.

In the early days, search engines were “literal.” If you searched for “best small dogs,” the engine just looked for those exact words. If a great article used the phrase “top tiny canines,” the engine might miss it because the words didn’t match perfectly.

Semantic Search

Today, search engines use AI to understand semantics: the actual meaning and context behind words. They recognize that “small dogs” and “tiny canines” mean the same thing, which lets the engine respond more like a person in conversation and less like a literal database lookup.
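
The difference between literal and meaning-based matching can be shown with a toy example. The hand-written `CONCEPTS` table below is purely an assumption for illustration; real engines learn these relationships from data with machine learning rather than from a lookup table.

```python
# Hypothetical table mapping words to shared concepts.
CONCEPTS = {
    "small": "SMALL", "tiny": "SMALL", "little": "SMALL",
    "dog": "DOG", "dogs": "DOG", "canine": "DOG", "canines": "DOG",
    "best": "TOP", "top": "TOP",
}

def to_concepts(text):
    """Replace each known word with its concept; keep unknown words as they are."""
    return {CONCEPTS.get(word, word) for word in text.lower().split()}

def literal_match(query, doc):
    """True only if every query word appears in the document exactly."""
    return set(query.lower().split()) <= set(doc.lower().split())

def semantic_match(query, doc):
    """True if every concept in the query appears in the document."""
    return to_concepts(query) <= to_concepts(doc)

query = "best small dogs"
doc = "top tiny canines"
print(literal_match(query, doc), semantic_match(query, doc))
```

The literal check fails because no words are shared, while the concept-based check succeeds because both phrases describe the same thing.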

AI Overviews and Generative AI

We are now seeing the rise of AI-generated summaries at the top of search results. Instead of just giving you a list of links, the search engine might use AI to read the top five websites for you and write a three-sentence summary of the answer. This is particularly useful for complex questions that don’t have a single “right” answer.

Voice and Visual Search

AI also powers Voice Search (like asking Siri or Google Assistant a question) and Visual Search (taking a photo of a plant to find out what species it is). These technologies rely on deep learning to process sound waves and images, translating them into data the search engine can index and rank.

Common Myths About Search Engines

Because search engines are so central to our lives, many myths have popped up about how they work. Let’s clear a few up.

Myth: Google searches the whole internet live.

Truth: As we learned, you are searching an index, which is a snapshot of the web. If a website changed five minutes ago, the search engine might not know until the next time it crawls that site.

Myth: Paying for ads helps your organic ranking.

Truth: There is a “church and state” separation between the advertising side and the search side. Paying for Google Ads will not make your website rank higher in the natural, organic results.

Myth: More keywords are always better.

Truth: This is an old tactic. Today, if you repeat the same keyword too many times, the search engine will actually lower your rank because it looks like spam. It is much better to write naturally for human readers.

Myth: The first result is always the most accurate.

Truth: The first result is the one the algorithm thinks is the best match. While top results are usually accurate, search engines can be fooled, or they may prioritize a popular but incorrect source over an obscure but correct one. Always check your sources!

Final Thoughts

Every time you perform a search, you are setting off a chain reaction involving enormous amounts of code and massive warehouses of servers.

From the crawlers that tirelessly explore the web, to the index that stores the world’s knowledge, to the algorithms that rank information in the blink of an eye, search engines are a marvel of modern technology. They have turned the entire internet into a searchable, usable tool for everyone on the planet.

Understanding how they work is more than just a technical lesson; it helps us become better at finding information and more aware of the digital world around us. While the technology will continue to evolve—incorporating more AI and becoming even more conversational—the core mission remains the same: helping you find the right information at the exact moment you need it.

Every search may feel instant, but behind that simple search bar is one of the most advanced and sophisticated systems ever built by human beings.

Frequently Asked Questions About How Search Engines Work

To help you get the most out of this guide, we have compiled a list of common questions that many users and website owners ask. These address specific “long-tail” queries that people often search for when trying to understand the deeper mechanics of the web.

Why is my website not showing up on Google search results?

There are several reasons why a site might be invisible. First, check if your site has been crawled and indexed. If the site is brand new, search engine bots might not have discovered it yet. Another common issue is a “noindex” tag in your website’s code, which tells search engines not to include the page in their index. Finally, if your content is considered low quality or if you have violated search engine guidelines, your site might be intentionally excluded from the results.

How long does it take for a new page to be indexed?

The time it takes for a new page to appear in search results can vary from a few hours to several weeks. Large, high-authority websites that post frequently are often crawled within minutes. For smaller or newer websites, the process usually takes longer. You can speed this up by submitting your XML sitemap directly to search engine tools or by linking to the new page from an already established, high-traffic page on your site.

What is the difference between crawling and indexing in SEO?

While people often use these terms interchangeably, they are distinct steps. Crawling is the discovery phase where a bot follows links to find new or updated content. Indexing is the storage phase where the bot analyzes that content and saves it into a massive database. Think of crawling as the librarian finding a new book, and indexing as the librarian actually putting that book on a shelf and recording its location in the catalog.

Do search engines look at images and videos too?

Yes, search engines are increasingly sophisticated at “reading” multimedia content. They use Alt Text (descriptions written in the code), file names, and the surrounding text on the page to understand what an image or video is about. With the advancement of AI and computer vision, engines can now even identify objects within an image or transcribe the audio in a video to better categorize the content.

Can a website be removed from a search engine index?

Absolutely. A search engine can “de-index” a site if it discovers malware or black-hat SEO tactics such as hidden text or deceptive redirects. Additionally, a website owner can request a removal if a page contains sensitive personal information or outdated content. If a site goes offline for a long period and returns a “404 Not Found” error repeatedly, the crawler will eventually remove that page from the index to keep the search results clean.

Does social media activity affect search engine rankings?

While social media likes and shares are not direct ranking factors (meaning a popular tweet won’t automatically make your website rank higher), there is an indirect benefit. High social media engagement leads to more people seeing your content, which increases the likelihood of other websites linking to you. Since backlinks are a major ranking factor, a strong social media presence can definitely help your overall visibility in the long run.