The History of Google Algorithms: From PageRank to AI Search

In the early days of the internet, finding information was an exercise in frustration. Search engines were essentially digital directories that relied on simple keyword matching. If you typed a word, the engine looked for pages where that word appeared most frequently. This era of the web was easily manipulated, leading to a poor user experience dominated by “keyword stuffing” and irrelevant results. Enter Google—a company that transformed from a research project into the ultimate gatekeeper of the world’s information.

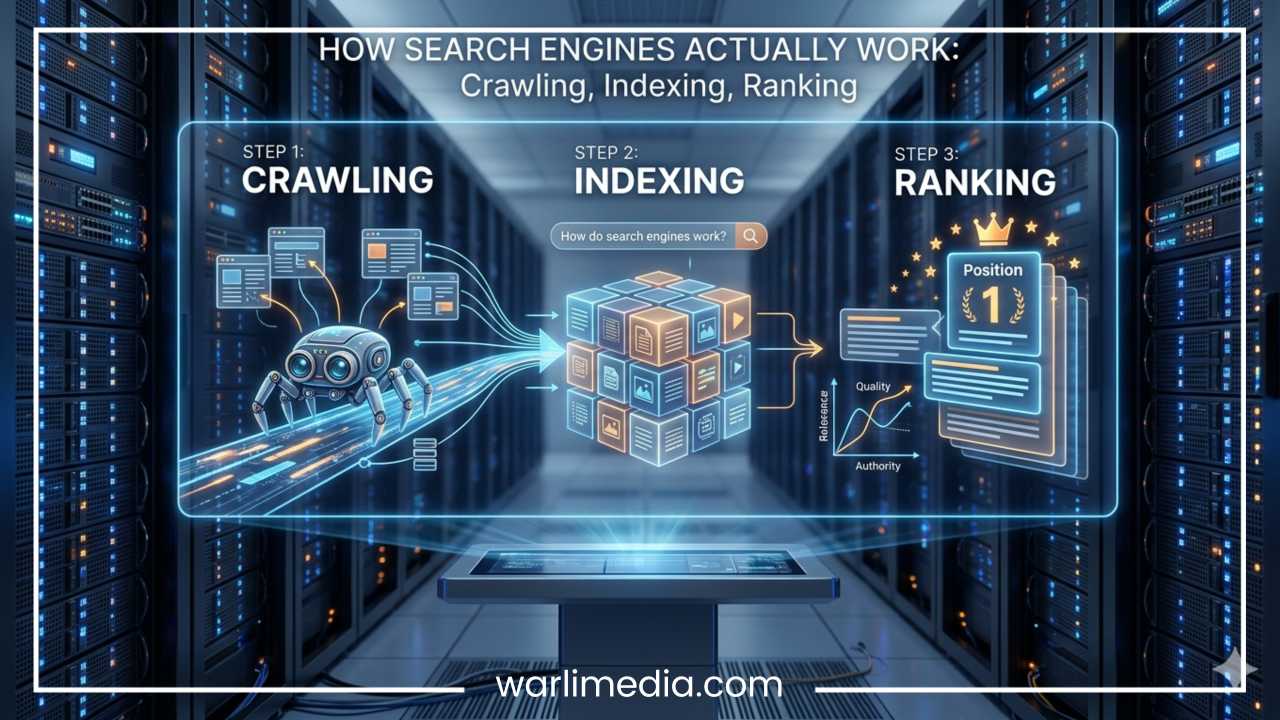

Google’s algorithms are the complex mathematical formulas and processes that the search engine uses to retrieve data from its search index and instantly deliver the best possible results for a query. Over the decades, these algorithms have evolved from basic link-counting mechanisms into sophisticated artificial intelligence systems. Google updates its systems thousands of times per year, with major “core updates” shifting the digital landscape overnight.

For SEO professionals, marketers, and website owners, understanding the history of these algorithms is not just a lesson in nostalgia; it is a roadmap for the future. By tracing the trajectory of these changes, we can see a clear pattern: Google has evolved from a keyword-matching search engine into an AI-driven answer engine. The goal has remained consistent—to connect users with high-quality, relevant content—but the methods have become infinitely more nuanced.

The Birth of Google and PageRank

In 1998, Larry Page and Sergey Brin, two Ph.D. students at Stanford University, published a paper that would change the world. They introduced Google, a search engine built on a revolutionary concept called PageRank. Before PageRank, Yahoo relied largely on a human-edited directory, while engines like AltaVista ranked pages mainly on on-page content and metadata. Google’s breakthrough was the realization that the structure of the web itself (the links between pages) could serve as a proxy for importance.

How PageRank Changed Search

PageRank was based on the idea that a link from one website to another was effectively a “vote” of confidence. However, not all votes were equal. A link from a highly authoritative site carried more weight than a link from an obscure blog. This introduced three critical concepts to the search landscape:

Link Authority: The “power” passed from one page to another (often called Link Juice).

Relevance: The context of the linking page in relation to the linked page.

Trust Signals: The reliability of the source based on its own backlink profile.

By prioritizing pages that other people found valuable enough to link to, Google provided significantly better results than its competitors. This algorithmic approach allowed Google to scale rapidly, eventually making it the dominant force in search. It marked a decisive shift away from human-curated directories and raw keyword matching toward a ranking system driven by how the web itself valued a page.
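The “weighted vote” idea can be made concrete with a small power-iteration sketch. This is a simplified illustration, not Google’s production system: the `toy_web` graph and page names are invented, and the damping factor of 0.85 is the value commonly cited from the original paper.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Approximate PageRank by power iteration.

    `links` maps each page to the list of pages it links to.
    """
    pages = set(links) | {t for targets in links.values() for t in targets}
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}

    for _ in range(iterations):
        # Every page starts each round with a small baseline share.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # A page splits its current rank evenly among its outlinks.
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # A page with no outlinks spreads its rank over all pages.
                share = damping * rank[page] / n
                for other in pages:
                    new_rank[other] += share
        rank = new_rank
    return rank


# Hypothetical five-page web: "b" gets its only link from the well-linked
# "hub", while "c" gets its only link from the unlinked "obscure" page.
toy_web = {
    "hub": ["a", "b"],
    "a": ["hub"],
    "b": ["hub"],
    "obscure": ["c"],
    "c": ["hub"],
}

ranks = pagerank(toy_web)
for page, score in sorted(ranks.items(), key=lambda item: -item[1]):
    print(f"{page:8s} {score:.3f}")
```

In this toy graph, "b" and "c" each receive exactly one inbound link, yet "b" ends up with the higher score because its link comes from the page that everyone else links to.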

Early Algorithm Updates

As Google’s popularity grew, so did the efforts of webmasters to “game” the system. The period between 2000 and 2005 saw the first major battles between Google’s engineers and “black-hat” SEOs who used deceptive tactics to inflate rankings.

Florida Update (2003)

The Florida update is often cited as the first major SEO crackdown. Before Florida, it was common practice to repeat a term hundreds of times in invisible text—matching the color of the text to the background of the page—to fool the engine. Florida decimated the rankings of sites using these tactics. It was a wake-up call for the industry, marking the end of the era where simple technical manipulation could guarantee a top spot. It particularly impacted affiliate marketers who relied on low-quality bridge pages.
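To see why repeated terms were such an obvious signal, here is a minimal keyword-density sketch. The function name and sample texts are illustrative assumptions, and nothing here reflects how Google actually measured stuffing; it only shows how sharply a stuffed page differs from natural copy.

```python
import re


def keyword_density(text, keyword):
    """Return the fraction of words in `text` equal to a single-word `keyword`."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if not words:
        return 0.0
    return words.count(keyword.lower()) / len(words)


# Hypothetical examples: a stuffed block of text versus a natural sentence.
stuffed_text = "cheap flights " * 50 + "book now"
natural_text = "We compare airlines so you can find cheap flights for your next trip"

print(f"stuffed: {keyword_density(stuffed_text, 'cheap'):.2f}")
print(f"natural: {keyword_density(natural_text, 'cheap'):.2f}")
```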

Austin Update (2004)

Hot on the heels of Florida, the Austin update targeted more sophisticated forms of deception. It went after link farms (groups of websites that exist only to link to each other) and hidden text. It also began penalizing metadata abuse, where webmasters would fill meta tags with irrelevant but high-volume keywords to siphon off traffic from unrelated searches.

The Nofollow Attribute (2005)

By 2005, comment spam had become a plague. Spammers were using the comment sections of popular blogs to drop links to their sites, hoping to siphon off PageRank. Google collaborated with other search engines to introduce the rel="nofollow" attribute. This told search engines not to pass authority through those specific links. It was a pivotal moment in link-building ethics, drawing a clear line between organic editorial links and user-generated or paid content.
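As a rough illustration of how a crawler could tell the two kinds of link apart, the sketch below uses Python’s standard `html.parser` module to separate links that carry `rel="nofollow"` from those that do not. The `LinkCollector` class and the sample HTML are hypothetical; real crawlers are far more involved.

```python
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collect hrefs, split by whether the link carries rel="nofollow"."""

    def __init__(self):
        super().__init__()
        self.followed = []
        self.nofollow = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attr_map = dict(attrs)
        href = attr_map.get("href")
        if not href:
            return
        # rel can hold several space-separated tokens, e.g. "external nofollow".
        rel_tokens = (attr_map.get("rel") or "").lower().split()
        if "nofollow" in rel_tokens:
            self.nofollow.append(href)
        else:
            self.followed.append(href)


# Hypothetical page: one editorial link and two links left in a comment.
sample_html = """
<p>Great post! <a href="https://example.com/editorial">source</a></p>
<div class="comment">
  Visit <a href="https://example.org/spam" rel="nofollow">my site</a>
  or <a href="https://example.net/ugc" rel="external nofollow">this one</a>
</div>
"""

collector = LinkCollector()
collector.feed(sample_html)
print("passes authority:", collector.followed)
print("nofollow:", collector.nofollow)
```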

Universal Search and Personalization

By the mid-2000s, Google began moving beyond the “10 blue links” model. The introduction of Universal Search in 2007 integrated images, videos, news, and maps directly into the main search results. This change was fueled by Google’s acquisition of YouTube and the development of Google Maps, realizing that a user’s query might be better answered by a video or a map than by a text article.

Over the same period, Google expanded Personalized Search, which it had introduced for signed-in users in 2005 and extended to all users in 2009. By using a user’s search history and geographic location, Google could tailor results to the individual. If two people searched for “football,” a user in London might see results for soccer, while a user in Chicago would see results for the NFL. This marked the rise of Local SEO, making proximity and local relevance key ranking factors. It was the beginning of Google’s transition from a global library to a personal assistant.

The Panda Update (2011)

In February 2011, Google released an update that sent shockwaves through the digital publishing world: Panda. This update was specifically designed to target “content farms”—sites that produced vast quantities of low-quality, shallow content designed purely to rank for high-volume keywords without providing real answers.

The War on Thin Content

Panda focused on the user experience and the “usefulness” of the information. It targeted:

Thin Content: Pages with very little original information or extremely short word counts.

Duplicate Content: Sites that simply scraped or slightly reworded content from other sources to avoid copyright filters.

High Ad-to-Content Ratios: Sites where the content was buried under advertisements, making it difficult for users to read the actual article.

Sites like eHow and various article directories saw their traffic vanish overnight. The key lesson from Panda was clear: Quality content is essential. It was no longer enough to just have a page about a topic; that page had to provide genuine value to the reader. Panda was unique because it assigned a “quality score” to entire sites, meaning a few bad pages could drag down the rankings of a site’s better content.

The Penguin Update (2012)

While Panda addressed content quality, the Penguin Update in 2012 arrived to clean up the backlink profile of the web. Despite previous updates, many SEOs were still buying links or using automated software to create thousands of “spammy” backlinks on forums, directories, and low-quality blogs.

Cracking Down on Link Schemes

Penguin targeted specific manipulative behaviors:

Exact-Match Anchor Text: If 90% of a site’s backlinks used the exact same keyword (e.g., “best cheap car insurance”), it looked unnatural to the algorithm.

Paid Links: Links purchased specifically to pass authority, often found in “sponsored” sections or sidebars of unrelated sites.

Link Schemes: Private blog networks (PBNs) and guest posting for the sole purpose of link building rather than sharing information.

Penguin was unique because it initially functioned as a filter that ran periodically. If a site was caught, it couldn’t recover until the next time the filter ran, which could be months or even a year later. Google released the Disavow Tool later in 2012, allowing webmasters to tell Google which links they wanted ignored. In 2016, Penguin 4.0 became part of the core algorithm and began running in real time, so recoveries no longer had to wait for a refresh. Penguin solidified the “white-hat” approach as the only sustainable way to build a brand online.
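The exact-match anchor text pattern described above is easy to check in your own backlink data. The sketch below computes how much of a link profile is taken up by each anchor text. The sample anchors are invented and the 50% cutoff is an arbitrary assumption for illustration; Google has never published a threshold.

```python
from collections import Counter


def anchor_text_share(anchors):
    """Return each normalized anchor text's share of the backlink profile."""
    normalized = [a.strip().lower() for a in anchors if a.strip()]
    counts = Counter(normalized)
    total = len(normalized)
    return {text: count / total for text, count in counts.items()}


def dominant_anchor(anchors):
    """Return (anchor text, share) for the most common anchor, or (None, 0.0)."""
    shares = anchor_text_share(anchors)
    if not shares:
        return None, 0.0
    top = max(shares, key=shares.get)
    return top, shares[top]


# Hypothetical profile: nine exact-match anchors and one branded anchor.
backlink_anchors = ["best cheap car insurance"] * 9 + ["Acme Insurance"]

SUSPICIOUS_SHARE = 0.5  # assumed cutoff, chosen only for this example

text, share = dominant_anchor(backlink_anchors)
print(f"{text!r} accounts for {share:.0%} of anchors")
if share > SUSPICIOUS_SHARE:
    print("Profile looks over-optimized; review these links.")
```

A natural profile usually mixes brand names, bare URLs, and generic phrases, so a single commercial phrase dominating the list is worth investigating.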

The Hummingbird Update (2013)

In 2013, Google didn’t just update its algorithm; it replaced the engine entirely. Hummingbird was a total overhaul designed to handle the increasing complexity of search queries, especially as mobile voice search began to rise.

The Shift to Semantic Search

Hummingbird introduced Semantic Search. Instead of looking at words in isolation, Google started looking at the intent and context behind them. It sought to understand the relationship between words. For example, if you searched for “weather in Seattle,” Google understood you wanted a forecast, not just a page containing those three words.

This led to the categorization of search intent:

Informational: The user wants to learn something (“how to fix a sink”).

Navigational: The user wants to go to a specific site (“Facebook login”).

Transactional: The user wants to buy something (“iPhone 15 price”).

Keyword stuffing became officially obsolete. Hummingbird allowed Google to rank pages that didn’t even contain the specific keywords used in the query, provided the content was the best match for the user’s intent. This was the first step toward true natural language processing.
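To make the three intent categories concrete, here is a deliberately naive sketch that guesses intent from hint words. This is not how Google classifies queries (its systems are not public and rely on far richer language understanding); the hint lists and the `guess_intent` function are assumptions made up for this example.

```python
# Hypothetical hint words for each category; real systems do not work this way.
NAVIGATIONAL_HINTS = ("login", "sign in", "homepage", "official site")
TRANSACTIONAL_HINTS = ("buy", "price", "cheap", "discount", "order")


def guess_intent(query):
    """Return a rough intent label for a search query."""
    q = query.lower()
    if any(hint in q for hint in NAVIGATIONAL_HINTS):
        return "navigational"
    if any(hint in q for hint in TRANSACTIONAL_HINTS):
        return "transactional"
    # Everything else is treated as a request for information.
    return "informational"


for query in ("how to fix a sink", "Facebook login", "iPhone 15 price"):
    print(f"{query!r}: {guess_intent(query)}")
```

Content planning can use the same three buckets: a guide suits an informational query, while a product page suits a transactional one.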

The Pigeon Update (2014)

Released in 2014, Pigeon focused on the local search ecosystem. It tied the local search algorithm more closely to the traditional web algorithm, improving how Google calculated distance and location relevance.

This update had a massive impact on local businesses. It prioritized “local citations” (mentions of a business name, address, and phone number across the web) and made Google Business Profiles (then called Google My Business) and user reviews central to local rankings. For the first time, small local businesses had a clear path to competing with national brands within their specific geographic area. It also improved the integration of search results with Google Maps, providing a seamless transition between finding a store and getting directions to it.

Mobilegeddon (2015)

By 2015, the tipping point had been reached: more searches were happening on mobile devices than on desktops. Google responded with the Mobile-Friendly Update, colloquially known in the industry as “Mobilegeddon.” This update made mobile responsiveness a direct ranking factor.

If a website was difficult to read or navigate on a smartphone—small text, buttons too close together, or content wider than the screen—it was penalized in mobile search results. This forced the entire web toward responsive design and set the stage for “mobile-first indexing,” where Google would eventually use the mobile version of a site as the primary version for its index, regardless of how the desktop version performed.

RankBrain (2015)

Late in 2015, Google revealed RankBrain, a machine-learning-based component of the algorithm. This was a watershed moment: Google was no longer relying only on rules written by humans; it was using machine learning to interpret queries and relate them to the results most likely to satisfy them.

Learning from Human Behavior

RankBrain’s primary job is to interpret ambiguous or never-before-seen queries (which make up about 15% of daily searches). It maps these queries to concepts it already understands. Many SEO practitioners also believe that Google looks at “user behavior signals” to judge whether a result was successful, although Google has not confirmed that RankBrain uses them directly:

Click-Through Rate (CTR): Do users click the result?

Dwell Time: Do they stay on the page for a long time, or bounce back to the search results immediately?

Pogo-sticking: Do users click multiple results in a row because they aren’t finding what they need?

RankBrain transformed Google from a static system into a dynamic one that could “understand” that a user searching for “the gray thing that eats peanuts” likely meant an elephant, even if the word “elephant” wasn’t in the query.

The Medic Update (2018)

In 2018, a major core update nicknamed “Medic” hit the health and wellness space particularly hard. This update brought the concepts of E-A-T (Expertise, Authoritativeness, and Trustworthiness) and YMYL (Your Money or Your Life) to the forefront. Google later expanded E-A-T to E-E-A-T by adding Experience in December 2022.

The Importance of Authority

Google raised the bar for websites that could impact a person’s future happiness, health, financial stability, or safety. For a medical site, it was no longer enough to have good content; the content needed to be written by or reviewed by medical professionals. This update proved that for certain niches, the “who” behind the content is just as important as the content itself. This led to a massive cleanup of “fake news” and unsubstantiated health claims in search results.

The BERT Update (2019)

In 2019, Google began applying BERT (Bidirectional Encoder Representations from Transformers), a language model it had open-sourced the previous year, to Search. While RankBrain helped interpret concepts, BERT was designed to understand the nuance of human language, specifically prepositions like “to,” “for,” and “with” that can change the entire meaning of a sentence.

Consider the query: “2019 brazil traveler to usa need visa.” Before BERT, Google might ignore the word “to” and show results for Americans traveling to Brazil. BERT allowed Google to understand that the direction of the travel was the most important part of the query. This represented a massive leap in natural language understanding, making search feel more conversational and less like a “computer command.” It allowed Google to process words in relation to all the other words in a sentence, rather than one-by-one in order.

Core Web Vitals and Page Experience (2021)

By 2021, Google shifted its focus toward technical user experience (UX). The Page Experience Update introduced Core Web Vitals, a set of specific metrics that measure how users perceive the experience of interacting with a web page beyond its information content.

The Three Pillars of UX

Largest Contentful Paint (LCP): Measures loading performance. How fast does the main content of the page appear?

First Input Delay (FID), replaced by Interaction to Next Paint (INP) in March 2024: Measures interactivity. How fast does the page respond when a user clicks a button or a link?

Cumulative Layout Shift (CLS): Measures visual stability. Does the content jump around as the page loads (often caused by ads or slow-loading images)?

This update signaled that a site could have great content and great links, but if it was slow or frustrating to use, it would not rank at the top. UX had officially become an SEO pillar.
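Each metric has published “good” and “poor” boundaries, so a measurement can be bucketed with a few lines of code. The sketch below uses the thresholds Google documents for LCP, INP, and CLS; the `rate` function and the sample measurements are hypothetical.

```python
# Published Core Web Vitals boundaries as (good up to, poor above).
THRESHOLDS = {
    "LCP": (2.5, 4.0),   # seconds
    "INP": (200, 500),   # milliseconds
    "CLS": (0.1, 0.25),  # unitless layout-shift score
}


def rate(metric, value):
    """Classify a measurement as good, needs improvement, or poor."""
    good_limit, poor_limit = THRESHOLDS[metric]
    if value <= good_limit:
        return "good"
    if value <= poor_limit:
        return "needs improvement"
    return "poor"


# Hypothetical measurements for a single page.
page_metrics = {"LCP": 2.1, "INP": 350, "CLS": 0.3}

for metric, value in page_metrics.items():
    print(f"{metric}: {value} is {rate(metric, value)}")
```

Google assesses these metrics at the 75th percentile of real-user page loads, so a single lab measurement like the one above is only a starting point.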

The Helpful Content Update (2022)

As mass-produced, search-first content (much of it automated) spread across the web, Google released the Helpful Content Update in August 2022. It introduced a site-wide signal designed to reward content that provides a satisfying experience for visitors and to demote content written primarily for search engines.

The update encouraged “people-first content.” Google started looking for signs of real-world experience. For example, a product review from someone who has actually held the device is more “helpful” than a summary of specs written by an AI or a content writer who hasn’t used the product. This was a direct strike against “SEO-first” content that follows a keyword formula but lacks original insight or depth.

SpamBrain and AI-Based Spam Detection

To combat the rising tide of automated spam, Google deployed SpamBrain. This is an AI-powered spam-prevention system that can identify not only traditional keyword and link spam but also “scaled content abuse,” the practice of using automation to generate thousands of pages to capture long-tail traffic. SpamBrain helps Google neutralize spammy links and filter out low-quality pages. Because it keeps learning from new spam patterns, it has become a central part of how Google keeps its index clean.

Google and Generative AI (2023–Present)

The most recent and profound shift in Google’s history is the integration of Generative AI. With the introduction of AI Overviews (formerly Search Generative Experience), Google is moving from providing links to providing direct answers.

Powered by the Gemini family of models, Google can now synthesize information from across the web into a single, cohesive response at the top of the search results. This “zero-click” search environment changes the game for publishers. SEO is no longer just about ranking #1; it is about being the source that the AI uses to generate its answer. This era represents the pinnacle of “intent-matching,” where the algorithm doesn’t just find a page—it interprets the entire web to solve the user’s problem instantly.

Impact on the Publishing Ecosystem

The rise of AI overviews has led to concerns about “zero-click” searches, where users get their answer on the Google results page and never click through to the source website. This forces marketers to rethink their strategy, focusing more on brand authority and “mention-worthiness” rather than just organic traffic. The future of SEO is increasingly about becoming an entity that the AI trusts as an authoritative source.

Major Themes Across Google’s Algorithm History

Looking back at nearly three decades of evolution, several clear themes emerge. The transition from the old way of searching to the modern era is characterized by a move away from technical tricks toward human-centric value.

| Old Google | Modern Google |
| --- | --- |
| Keywords: Exact word matching. | Search Intent: Understanding what the user wants. |
| Backlinks Only: Quantity of links mattered most. | Trust + Relevance + UX: Quality and context of authority. |
| Static Algorithms: Updates happened months apart. | AI & Machine Learning: Real-time, evolving systems. |
| Desktop-First: Sites designed for large screens. | Mobile-First: Speed and responsiveness on phones. |
| Ranking Pages: Finding the best document. | Answering Questions: Providing the best solution. |

The Evolution of the Search Interface

We have also seen the Search Engine Results Page (SERP) evolve from a simple list of links into a rich ecosystem. Rich snippets, knowledge panels, featured snippets, and video carousels have made the SERP an interactive experience. This has shifted the focus of SEO from “meta descriptions” to “structured data” (Schema markup), helping Google understand exactly what each part of a page represents.
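Structured data is usually embedded in a page as JSON-LD. As a minimal sketch, the helper below builds schema.org `FAQPage` markup from question and answer pairs using Python’s standard `json` module. The `faq_jsonld` function and the sample question are illustrative; check Google’s structured data documentation for which properties are eligible for rich results.

```python
import json


def faq_jsonld(qa_pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }


# Hypothetical FAQ entry used only for this example.
markup = faq_jsonld([
    ("What was the Panda update?",
     "A 2011 update that targeted thin, low-quality content."),
])

# The serialized JSON goes inside a <script type="application/ld+json"> tag.
serialized = json.dumps(markup, indent=2)
print(serialized)
```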

Major Update Timeline

| Year | Update | Purpose |
| --- | --- | --- |
| 1998 | PageRank | The original foundation based on link authority. |
| 2003 | Florida | The first major crackdown on keyword stuffing. |
| 2011 | Panda | Targeted thin, low-quality, and duplicate content. |
| 2012 | Penguin | Targeted manipulative and spammy link building. |
| 2013 | Hummingbird | Introduced semantic search and conversational intent. |
| 2015 | Mobilegeddon | Prioritized mobile-friendly sites for smartphone users. |
| 2015 | RankBrain | Integrated machine learning into the ranking process. |
| 2018 | Medic | Emphasized E-A-T (now E-E-A-T) and authority in sensitive niches. |
| 2019 | BERT | Improved natural language understanding of context. |
| 2021 | Core Web Vitals | Made technical user experience (speed/stability) a factor. |
| 2022 | Helpful Content | Penalized content made solely for SEO purposes. |
| 2023 | AI Overviews | Introduced generative AI to answer queries directly. |

Case Studies: The Winners and Losers

The history of Google is littered with the “ghosts” of sites that failed to adapt.

Panda Case Study: Content farms like Demand Media (eHow) saw their valuations plummet as Google stopped rewarding “how-to” articles that were 200 words long and lacked depth. They were forced to pivot to high-quality, expert-led editorial content to survive.

Penguin Case Study: Many e-commerce sites in the early 2010s used “private blog networks” to boost their rankings. When Penguin hit, many of these businesses lost a large share of their organic traffic, and the revenue that came with it, almost overnight. Those who recovered had to spend years “cleaning” their link profiles using the Disavow Tool.

Mobilegeddon Case Study: Many legacy news organizations and government sites were slow to adopt responsive design. In 2015, they saw their mobile traffic drop significantly until they modernized their web infrastructure, proving that technical SEO is just as important as content.

Final Thoughts

The history of Google algorithms is a story of a relentless pursuit of quality. From the simple “link-counting” days of 1998 to the sophisticated AI-driven models of today, Google has consistently moved toward one goal: providing the most helpful answer as quickly as possible.

As we look toward the future, the trend is clear. SEO success is no longer about finding a “secret” trick or a technical loophole. Instead, it is about building a brand that demonstrates genuine Experience, Expertise, Authoritativeness, and Trustworthiness. The algorithms of the future will be even more adept at distinguishing between content created to please a machine and content created to help a human.

For those who create truly valuable content, keep their sites fast and accessible, and focus on the user’s needs, the evolution of Google’s algorithms isn’t a threat—it’s an opportunity. The machines have finally learned to value what humans value. In the end, the best way to optimize for an AI-driven search engine is to be undeniably, authentically useful. The future of search belongs not to the best “optimizer,” but to the best creator.

Frequently Asked Questions About Google Algorithm History

The answers below give quick summaries of the updates and concepts covered in this article.

What was the first Google algorithm update?

While Google made many minor adjustments in its early years, the Florida update in November 2003 is widely considered the first major “named” update that fundamentally changed the SEO industry. Before Florida, many sites could still rank largely on keyword density. This update introduced a more sophisticated way of analyzing on-page content, effectively ending the era of blatant keyword stuffing and “invisible” text.

How often does Google update its search algorithm?

Google updates its search algorithm thousands of times per year. While the majority of these are minor changes that go unnoticed by the general public, Google typically releases several Core Updates annually. These core updates are significant shifts that can cause noticeable fluctuations in search rankings across the entire web.

What is the difference between Panda and Penguin updates?

The main difference lies in their targets: Google Panda (2011) was designed to combat low-quality content, such as thin articles, duplicate text, and content farms. Google Penguin (2012) was designed to combat link spam, specifically targeting websites that used manipulative backlink strategies, paid links, or private blog networks to artificially inflate their authority.

Why did my website traffic drop after a Google Core Update?

A traffic drop after a core update usually indicates that Google’s systems have reassessed how your content meets current quality standards. It does not necessarily mean your site is “penalized” in the traditional sense; rather, it often means other pages have been found to provide better value, expertise, or user experience. Recovery typically requires improving your site’s overall E-E-A-T (Experience, Expertise, Authoritativeness, and Trustworthiness).

What are Google’s Your Money or Your Life (YMYL) guidelines?

YMYL stands for “Your Money or Your Life,” a term from Google’s Search Quality Rater Guidelines for content that could impact a person’s future happiness, health, financial stability, or safety. Because the stakes are higher for these topics (such as medical advice, legal information, or financial planning), Google holds these pages to a stricter standard and looks for stronger evidence of expertise and trustworthiness.

How does RankBrain affect SEO today?

RankBrain is a machine-learning component that helps Google process and understand ambiguous search queries. For SEO, this means that optimizing for a specific keyword is less important than optimizing for user intent. Google has not confirmed that RankBrain uses engagement data directly, but if people quickly leave your page to find a better answer elsewhere (pogo-sticking), that is a strong sign the page is not satisfying the intent behind the query.

Is mobile-friendliness still a ranking factor?

Yes. Since the 2015 Mobilegeddon update and the subsequent move to Mobile-First Indexing, Google primarily uses the mobile version of a website’s content for indexing and ranking. If your site is not responsive or offers a poor experience on a smartphone, it is at a clear disadvantage, and because the mobile version is what Google indexes, that can affect desktop rankings too.

What are Core Web Vitals and why do they matter?

Core Web Vitals are a set of specific technical metrics that Google uses to measure a user’s experience on a page. They focus on three areas:

LCP (Loading): How fast the largest element on the screen loads.

INP (Interactivity): How quickly the page responds to user inputs like clicks.

CLS (Visual Stability): Whether elements on the page shift around unexpectedly while loading.

These matter because they provide a quantifiable way for Google to reward sites that are fast and frustration-free.

Can AI-generated content rank on Google?

Google’s official stance is that the use of AI is not against their guidelines, provided the content is high-quality, original, and helpful to users. However, content generated solely to manipulate search rankings without adding unique value is considered a violation of their spam policies. The focus should always be on “people-first” content rather than “search-engine-first” content.

What is the future of Google algorithms and SEO?

The future of Google algorithms is trending toward Generative AI and conversational search. With the integration of systems like Gemini and AI Overviews, Google is evolving into an “answer engine.” Success in the future of SEO will likely depend on being cited as a trusted source by these AI models, focusing on deep expertise and providing a flawless technical user experience.