Robots.txt for Beginners: A Simple Guide

When you launch a website, you are essentially opening a digital storefront to the world. However, the first “visitors” to your site aren’t usually humans; they are automated programs known as web crawlers. These crawlers browse your content to determine what your site is about and how it should appear in search results. But what if you have sections of your site that you don’t want the world to see—or more specifically, parts you don’t want search engines to waste their time on?

This is where the robots.txt file comes into play. At its core, a robots.txt file is a simple text file stored in your website’s root directory. It acts as a set of instructions for web robots, telling them which parts of your site they are allowed to visit and which parts are off-limits.

The history of this file dates back to 1994. Martijn Koster, a Dutch software engineer, proposed the Robots Exclusion Protocol after a crawler caused significant performance issues on his servers. He realized there needed to be a standardized way for webmasters to communicate with automated scripts. Since then, it has become a fundamental pillar of Search Engine Optimization (SEO).

Understanding robots.txt is crucial because it gives you control over your “crawl budget,” the amount of time and resources a search engine like Google spends on your site. If used correctly, it helps crawlers spend that budget on your most important pages. If used incorrectly, it can accidentally hide your entire website from search engines. This guide is designed to take you from a total beginner to a confident user, providing a practical, step-by-step approach to managing your site’s relationship with the bots.

Understanding Web Crawlers and Robots

To understand why a robots.txt file is necessary, you first need to understand the “bots” it is meant to guide. A web crawler—often called a robot, bot, or spider—is a software program that systematically browses the World Wide Web.

How Search Engines Use Crawlers

Search engines like Google, Bing, and DuckDuckGo use these crawlers to build an index of the web. The process generally follows a three-step cycle:

Crawling: The bot discovers your website and follows links from page to page.

Indexing: The bot analyzes the content (text, images, and videos) and stores it in a massive database.

Ranking: When someone searches for a query, the search engine pulls the most relevant results from that index.

Crawling vs. Indexing

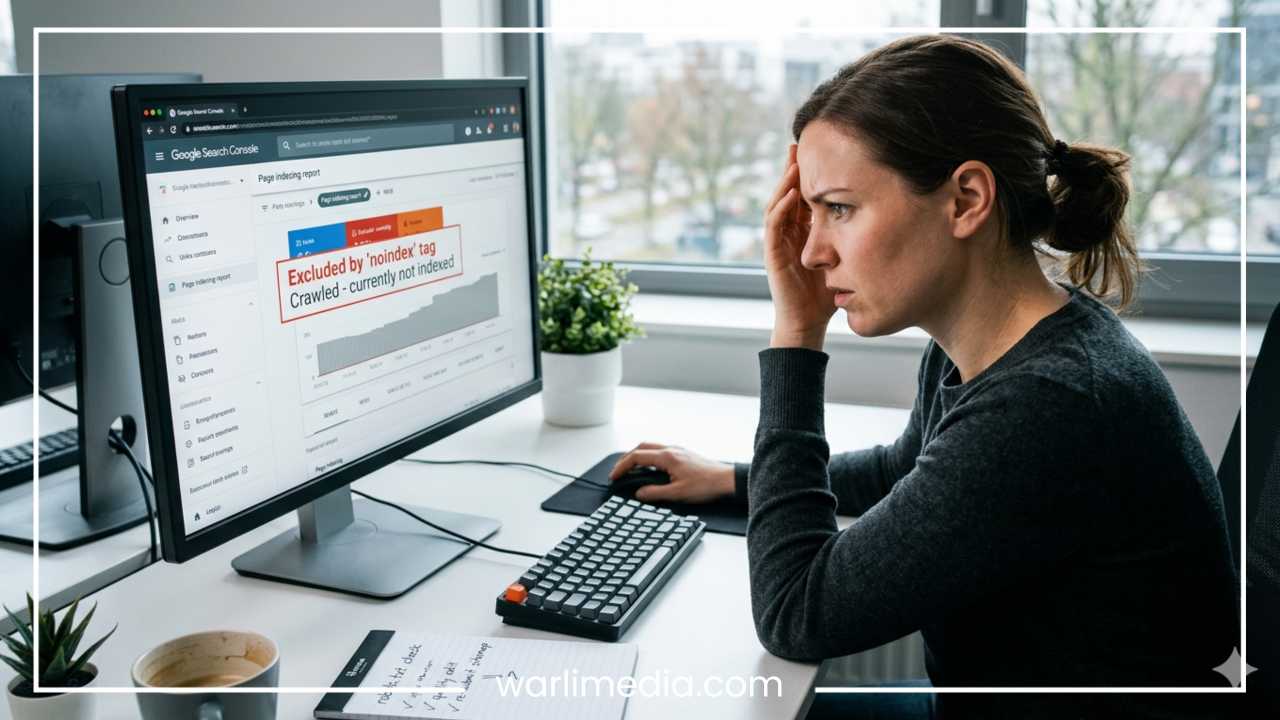

It is a common misconception that “blocking a page from being crawled” is the same as “preventing it from being indexed.”

Crawling is the act of the bot visiting the page to read its data.

Indexing is the act of storing that page in the search engine’s database so that it can appear in search results.

If you block a page via robots.txt, a bot won’t crawl it, but if other websites link to that page, it might still show up in search results (though without a description). We will discuss how to handle this distinction later.

Common Bots

There are thousands of bots on the internet. Some are “good,” like Googlebot (Google), Bingbot (Bing), and Slurp (Yahoo), which help your site get traffic. Others are “bad” or “malicious” bots used for scraping content, finding security vulnerabilities, or spamming. While robots.txt is primarily designed for reputable search engines that choose to follow the rules, it serves as the first line of communication for any automated visitor reaching your server.

What a Robots.txt File Is

Technically speaking, a robots.txt file is a plain text file that follows the Robots Exclusion Protocol. It is not a piece of code like HTML or JavaScript; it is a simple list of directives.

Location is Everything

For a robots.txt file to be effective, it must be placed in the root directory of your website host. This means it must be accessible at:

https://www.yourdomain.com/robots.txt

If you place it in a subdirectory, such as yourdomain.com/assets/robots.txt, search engines will never find it, and they will assume your site has no crawling restrictions.
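
To make the location rule concrete, here is a minimal Python sketch that works out where a crawler would look for the file, given any page on a site. The function name robots_txt_url is just an illustration, not part of any library.

```python
from urllib.parse import urlsplit, urlunsplit


def robots_txt_url(page_url: str) -> str:
    """Return the robots.txt URL that applies to the given page URL."""
    parts = urlsplit(page_url)
    # Keep the scheme and host (including any port), replace the path,
    # and drop the query string and fragment.
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))


print(robots_txt_url("https://www.yourdomain.com/assets/css/site.css"))
# https://www.yourdomain.com/robots.txt
```

No matter how deep the page sits, the answer is always the file at the root of that host.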

The Basic Syntax

The file uses a simple, line-based syntax. Directive names such as User-agent are not case-sensitive, but the paths you list are. There are four main directives:

User-agent: Identifies which bot the rule applies to.

Disallow: Tells the bot which URL or directory it cannot visit.

Allow: Tells the bot it can visit a specific page within a disallowed directory.

Sitemap: Provides the URL to your XML sitemap to help bots find all your pages.

A Simple Example

A standard robots.txt file might look like this:

User-agent: *

Disallow: /admin/

Allow: /admin/public-files/

Sitemap: https://www.yourdomain.com/sitemap.xml

In this example, the asterisk (*) means the rule applies to all bots. It tells them to stay out of the “admin” folder but permits them to see “public-files” inside that folder. Finally, it points them toward the sitemap for better navigation.
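
If you would like to see a parser read this file, the sketch below feeds the same example to Python’s built-in urllib.robotparser module and asks it about two made-up URLs (/admin/settings and /blog/hello are assumptions for illustration). The directives are written on consecutive lines here, because this parser treats a blank line as the end of a group.

```python
from urllib.robotparser import RobotFileParser

EXAMPLE = """\
User-agent: *
Disallow: /admin/
Allow: /admin/public-files/
Sitemap: https://www.yourdomain.com/sitemap.xml
"""

parser = RobotFileParser()
parser.parse(EXAMPLE.splitlines())

# A page inside the admin folder is blocked for every bot.
print(parser.can_fetch("*", "https://www.yourdomain.com/admin/settings"))  # False
# A page outside the admin folder is not affected.
print(parser.can_fetch("*", "https://www.yourdomain.com/blog/hello"))      # True
# The sitemap URL is exposed separately from the crawl rules.
print(parser.site_maps())
```

Python’s parser is simpler than the ones search engines run, so its verdict on Allow exceptions such as /admin/public-files/ may differ from Googlebot’s. Treat it as a quick sanity check rather than the final word.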

How Robots.txt Works

When a web crawler arrives at a website, the very first thing it does—before downloading a single image or reading a single line of text—is look for the robots.txt file.

Guidelines, Not Laws

It is vital to remember that robots.txt is a voluntary agreement. Respectable search engines like Google and Bing honor these instructions as a matter of policy. However, malicious bots (those looking to steal data or spread malware) will likely ignore your robots.txt entirely. Therefore, you should never use robots.txt as a security measure to hide sensitive information. If a page contains private data, protect it with a password. If you only want to keep a page out of search results, use a “noindex” tag instead, and leave the page crawlable so that bots can actually see the tag.

The SEO Implications

Using robots.txt incorrectly can lead to “Indexing without Crawling.” If Googlebot is blocked from a page, it cannot see the content. If that page has many backlinks, Google might still list it in search results because it knows the page exists, but the listing will look broken or empty.

Logic of Rules

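Crawlers do not simply obey whichever rule comes first. Under the current standard (RFC 9309), which Google follows, the most specific matching rule wins, meaning the rule with the longest matching path. If an Allow rule and a Disallow rule are equally specific, Allow wins. Some older or simpler parsers apply the first matching rule instead, so keep your rules simple and test them. For example, if you disallow /images/ but allow /images/logo.png, the bot will understand that the logo is the only exception to the rule.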
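
The “most specific rule wins” idea can be sketched in a few lines of Python. This is a simplified illustration that assumes plain path prefixes (no wildcards) and a single group of rules; is_allowed is a hypothetical helper, not a function from any library.

```python
def is_allowed(rules, path):
    """Decide whether `path` may be crawled.

    rules: list of ("allow" | "disallow", path_prefix) pairs for one bot.
    """
    best_len = -1
    allowed = True  # if no rule matches, crawling is allowed
    for directive, prefix in rules:
        if not prefix or not path.startswith(prefix):
            continue  # empty or non-matching rules are ignored
        is_allow = directive.lower() == "allow"
        # A longer prefix is more specific. On a tie, prefer allow.
        if len(prefix) > best_len or (len(prefix) == best_len and is_allow):
            best_len = len(prefix)
            allowed = is_allow
    return allowed


rules = [("disallow", "/images/"), ("allow", "/images/logo.png")]
print(is_allowed(rules, "/images/logo.png"))    # True
print(is_allowed(rules, "/images/banner.jpg"))  # False
print(is_allowed(rules, "/about/"))             # True
```

Because the decision depends on rule length rather than position, swapping the two rules gives the same answers.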

Writing a Robots.txt File

Creating your first robots.txt file is a straightforward process. You don’t need expensive software; any plain text editor like Notepad (Windows) or TextEdit (Mac) will work.

Step 1: Define the User-agent

Every group of rules must start with a User-agent.

To target all bots:

User-agent: *

To target Google specifically:

User-agent: Googlebot

Step 2: Set Your Disallows

Identify the parts of your site that don’t need to be in search engines. These typically include:

Administrative backends (e.g., /wp-admin/ for WordPress).

Temporary files or “staging” folders.

Internal search results pages (to avoid duplicate content issues).

Step 3: Add Your Sitemap

At the very bottom of the file, add the link to your XML sitemap. This helps crawlers discover your content faster.

Sitemap: https://example.com/sitemap_index.xml
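
The three steps above can also be sketched as a small Python helper that assembles the file for you. The function name build_robots_txt and the /staging/ and /search/ paths are assumptions chosen for illustration; substitute the folders that exist on your own site.

```python
def build_robots_txt(user_agent, disallow, sitemap_url=None):
    """Assemble the text of a robots.txt file with a single group of rules."""
    # Step 1: say which bot the rules are for.
    lines = [f"User-agent: {user_agent}"]
    # Step 2: one Disallow line per path. An empty Disallow blocks nothing.
    lines += [f"Disallow: {path}" for path in disallow] or ["Disallow:"]
    # Step 3: point crawlers at the sitemap.
    if sitemap_url:
        lines += ["", f"Sitemap: {sitemap_url}"]
    return "\n".join(lines) + "\n"


text = build_robots_txt(
    "*",
    ["/wp-admin/", "/staging/", "/search/"],
    "https://example.com/sitemap_index.xml",
)
print(text)
```

Save the printed text as a plain file named robots.txt and upload it to the root directory of your site.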

Common Scenarios and Examples

Scenario A: Blocking Everything (Use with caution!)

If your site is still under construction and you don’t want search engines to crawl it yet:

User-agent: *

Disallow: /

Scenario B: Blocking a Specific Bot

If you want Google to see your site but you want to block a different bot, like “BadBot”:

User-agent: BadBot

Disallow: /
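
As a quick check of Scenario B, this sketch uses Python’s built-in urllib.robotparser to confirm that only the named bot is turned away. The path /any/page.html is a made-up example.

```python
from urllib.robotparser import RobotFileParser

parser = RobotFileParser()
parser.parse(["User-agent: BadBot", "Disallow: /"])

for agent in ("BadBot", "Googlebot"):
    print(agent, parser.can_fetch(agent, "/any/page.html"))
# BadBot False
# Googlebot True
```

Bots that are not named in any group, and have no “*” group to fall back on, are free to crawl everything.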

Scenario C: Allowing Only One Folder

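If you want to hide the whole site except for your “blog” section (this relies on crawlers such as Googlebot letting the more specific Allow rule override the broader Disallow):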

User-agent: *

Disallow: /

Allow: /blog/

Common Mistakes and Pitfalls

Even experienced webmasters make mistakes with robots.txt. Because the file is so powerful, a tiny typo can have massive consequences.

The “Slash” Disaster

The forward slash (/) is the most powerful character in the file.

Disallow: / blocks the entire site.

Disallow: (blank) allows the entire site.

New users often accidentally include a slash where they didn’t intend to, effectively “de-indexing” their entire business overnight.
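
Because one character makes such a difference, it can be worth running a small safety check before you upload a new file. The sketch below uses Python’s built-in urllib.robotparser; blocks_entire_site is a hypothetical helper name.

```python
from urllib.robotparser import RobotFileParser


def blocks_entire_site(robots_txt: str, agent: str = "*") -> bool:
    """Return True if the given robots.txt text stops `agent` at the homepage."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return not parser.can_fetch(agent, "/")


print(blocks_entire_site("User-agent: *\nDisallow: /"))         # True
print(blocks_entire_site("User-agent: *\nDisallow:"))           # False
print(blocks_entire_site("User-agent: *\nDisallow: /admin/"))   # False
```

If the first result ever shows up for your live file by accident, fix it before the next crawl.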

Blocking CSS and JS

In the past, webmasters would block folders containing CSS and JavaScript files to save crawl budget. However, modern search engines need to see these files to “render” your page and understand its layout. If Google cannot see your CSS, it might think your site is not mobile-friendly, which will hurt your rankings.

Using Robots.txt for Security

As mentioned, robots.txt is public. Anyone can go to yourdomain.com/robots.txt and see exactly which folders you are trying to hide. If you name a folder /secret-login-page/ and disallow it, you have just given hackers a roadmap to your most sensitive areas.

Case Sensitivity

The paths in robots.txt are case-sensitive. If your folder is named /Downloads/ and you write Disallow: /downloads/, the rule will not match, and the bot will crawl the folder anyway.
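
Here is a short demonstration using Python’s built-in urllib.robotparser. The directive names are deliberately written in odd capitalization to show that only the path is compared letter for letter; file.zip is a made-up file name.

```python
from urllib.robotparser import RobotFileParser

parser = RobotFileParser()
# Directive names are matched without regard to case; paths are not.
parser.parse(["user-agent: *", "DISALLOW: /downloads/"])

print(parser.can_fetch("*", "/downloads/file.zip"))  # False
print(parser.can_fetch("*", "/Downloads/file.zip"))  # True
```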

Advanced Robots.txt Tips

Once you have mastered the basics, you can use more advanced techniques to fine-tune how bots interact with your server.

Wildcards and Pattern Matching

You can use the asterisk (*) as a wildcard for pattern matching.

Blocking all URLs with a query string:

Disallow: /*?*

Blocking all files of a certain type (e.g., PDFs):

Disallow: /*.pdf$

The dollar sign ($) indicates the end of a URL, ensuring the rule only applies to URLs ending exactly in .pdf.
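
To see how these patterns behave, the sketch below translates a robots.txt pattern into a regular expression. It is an illustration of the matching idea only, with hypothetical helper names (compile_rule, rule_matches) and made-up URLs. Note that Python’s built-in urllib.robotparser does plain prefix matching and does not understand these wildcards, which is why a custom translation is used here.

```python
import re


def compile_rule(pattern: str) -> re.Pattern:
    """Turn a robots.txt path pattern into a compiled regular expression."""
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    # Each * stands for "any run of characters"; everything else is literal.
    body = ".*".join(re.escape(part) for part in pattern.split("*"))
    return re.compile(body + ("$" if anchored else ""))


def rule_matches(pattern: str, path: str) -> bool:
    # Patterns always match from the start of the path.
    return compile_rule(pattern).match(path) is not None


print(rule_matches("/*.pdf$", "/files/report.pdf"))             # True
print(rule_matches("/*.pdf$", "/files/report.pdf?download=1"))  # False
print(rule_matches("/*?*", "/shop?color=red"))                  # True
print(rule_matches("/*?*", "/shop"))                            # False
```

The second result shows what the dollar sign is for: without it, the PDF rule would also catch URLs that merely contain .pdf somewhere in the middle.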

Crawl-delay

Some bots crawl too aggressively, which can slow down your server for human users. The Crawl-delay directive tells bots to wait a certain number of seconds between page requests.

User-agent: *

Crawl-delay: 10

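Note: Googlebot does not support this directive. Google adjusts its crawl rate automatically based on how your server responds, and the old crawl rate setting in Google Search Console has been retired, so check Google’s current documentation if Googlebot is putting too much load on your server.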
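
From the crawler’s side, honoring the directive is simple. This sketch shows how a well-behaved script might read the delay with Python’s built-in urllib.robotparser; delay_for and the bot name MyCrawler are assumptions for illustration.

```python
from urllib.robotparser import RobotFileParser


def delay_for(robots_txt: str, agent: str) -> int:
    """Return the Crawl-delay (in seconds) that applies to `agent`, or 0 if none."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    delay = parser.crawl_delay(agent)
    return delay if delay is not None else 0


print(delay_for("User-agent: *\nCrawl-delay: 10", "MyCrawler"))  # 10
print(delay_for("User-agent: *\nDisallow:", "MyCrawler"))        # 0
```

A polite crawler would then pause for that many seconds (for example with time.sleep) between page requests.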

Handling Images and Files

If you have high-resolution images that you don’t want appearing in Google Image Search, you can block the specific directory:

User-agent: Googlebot-Image

Disallow: /high-res-photos/

Testing and Validating Your Robots.txt

You should never “guess” if your robots.txt file is working. A single error can lead to a drop in traffic.

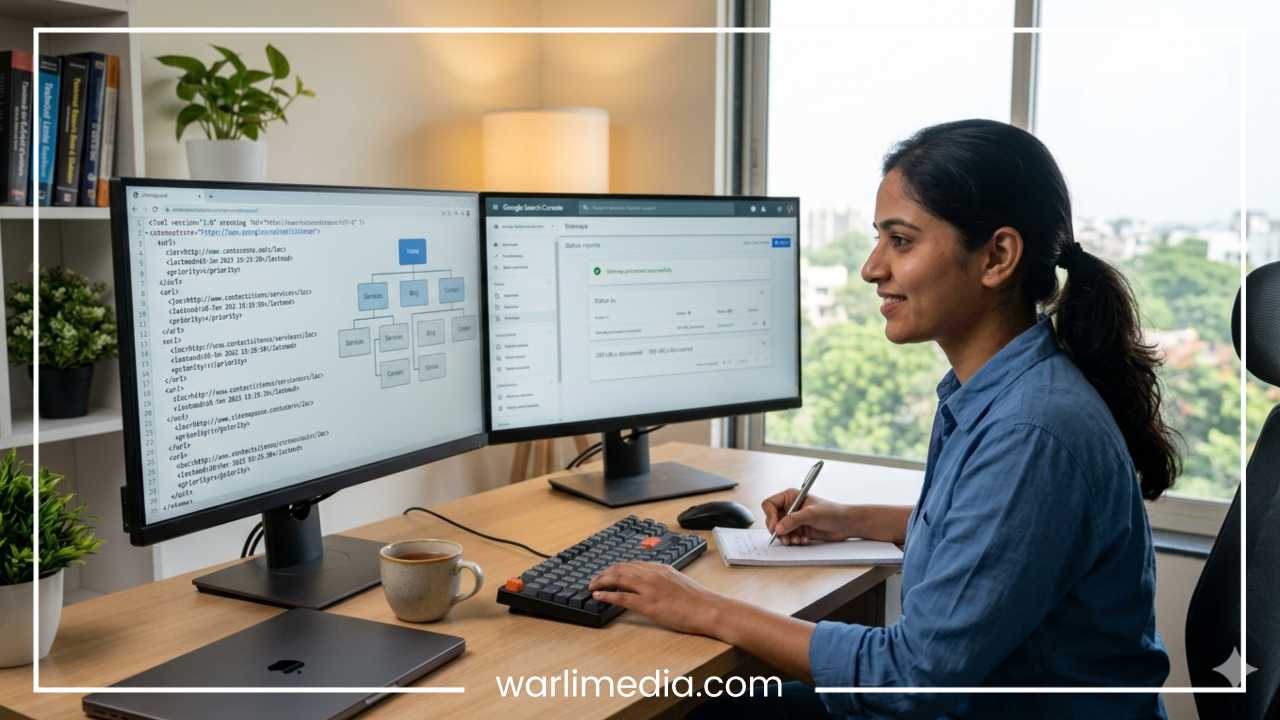

Google Search Console

The most reliable way to test your file is through Google Search Console. Older versions of the interface included a standalone “Robots.txt Tester.” It has since been replaced by a robots.txt report (found under Settings), which shows the robots.txt files Google found for your site, when they were last crawled, and any warnings or errors. To check whether a specific URL is blocked, use the URL Inspection tool.

Online Validation Tools

If you don’t have Search Console set up yet, there are several free online “Robots.txt Validators.” You simply enter your URL, and the tool checks for:

Syntax errors.

Logic conflicts.

Accessibility issues.

The Manual Check

Always perform a manual check. Open a private browser tab and navigate to yourdomain.com/robots.txt. If you see your text file appearing clearly, the server is serving it correctly. If you see a 404 error, the file is missing or in the wrong place.
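
The same check can be scripted. The sketch below is one possible approach using only Python’s standard library; robots_status and explain are hypothetical helper names, and the wording of the messages is an assumption.

```python
import urllib.error
import urllib.request


def robots_status(site: str) -> int:
    """Return the HTTP status code for <site>/robots.txt (makes a network request)."""
    url = site.rstrip("/") + "/robots.txt"
    try:
        with urllib.request.urlopen(url, timeout=10) as response:
            return response.status
    except urllib.error.HTTPError as err:
        # Error responses such as 404 arrive as exceptions; keep the code.
        return err.code


def explain(status: int) -> str:
    """Translate a status code into plain language."""
    if status == 200:
        return "OK: the file is being served"
    if status == 404:
        return "Not found: the file is missing or not in the root directory"
    return f"Unexpected status {status}: check your server configuration"


# Example usage (requires internet access):
# print(explain(robots_status("https://www.yourdomain.com")))
```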

Case Studies and Examples

The E-commerce Filter Issue

A large clothing retailer once noticed their organic traffic was plummeting. Upon investigation, they found their robots.txt was blocking the /category/ folder. Because all their products were housed within category folders, Google dropped almost every product page from the search results. They fixed the file, but it took weeks for the traffic to return to previous levels.

The News Site Success

A major news organization used robots.txt to manage “Crawl Budget.” During a major world event, their server was struggling with the volume of bot traffic. By using the Disallow command on their massive archive of stories from twenty years ago, they forced Googlebot to focus only on “Breaking News” sections. This resulted in their news appearing in search results much faster than their competitors.

Lessons Learned

The biggest lesson from these cases is that robots.txt should be minimalist. Only block what is absolutely necessary. It is far safer to allow too much than to accidentally block your source of income.

Final Thoughts

The robots.txt file is a small but mighty part of your website’s infrastructure. While it may seem intimidating at first, it follows a simple logic: it is a conversation between you and the search engines.

Key Takeaways:

Keep it simple: Most small websites only need to block their admin folders and link to their sitemap.

Location matters: Ensure the file is in the root directory.

Test before you go live: Use tools like Google Search Console to verify your rules.

Not a security tool: Use passwords for sensitive data, not robots.txt.

Monitor regularly: Whenever you add a new section to your site or change your URL structure, review your robots.txt to ensure everything is still working as intended.

By following this guide, you have moved beyond the “beginner” phase. You now have the power to control how the world’s most powerful search engines interact with your digital home. Handle that power with care, and your SEO will thank you.